TL;DR

Large Reasoning Models (LRMs) improve the reasoning ability of large language models (LLMs), but they are inefficient in token usage, memory consumption, and inference time. This survey reviews methods designed specifically for LRMs that reduce token usage while preserving reasoning quality. It groups these methods into two categories: explicit compact Chain-of-Thought (CoT), which shortens the reasoning trace while keeping its explicit structure, and implicit latent CoT, which encodes reasoning steps in hidden representations rather than in explicit tokens.

Beyond this taxonomy, the survey empirically analyzes existing methods in terms of both performance and efficiency. It also outlines open challenges: human-centric controllable reasoning, the trade-off between interpretability and efficiency, and the safety of efficient reasoning. The authors further highlight model merging, new architectures, and agent routers as promising directions for improving inference efficiency.

Key Takeaways

Why does it matter?

This survey matters to researchers because it addresses the growing challenge of efficient reasoning in large language models and offers a guide to current methods and future directions. By laying out the key challenges and candidate solutions, it supports more practical and scalable applications.

Visual Insights

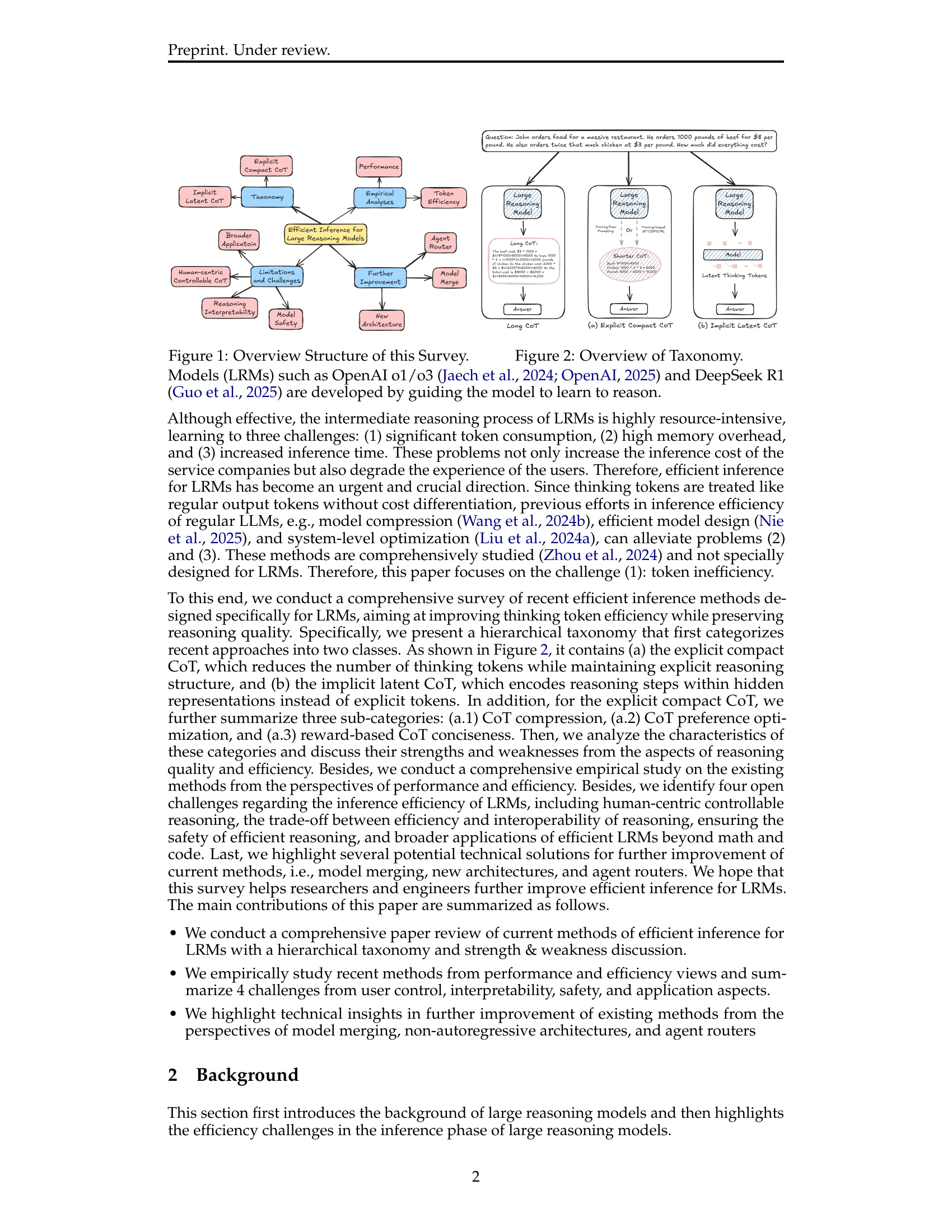

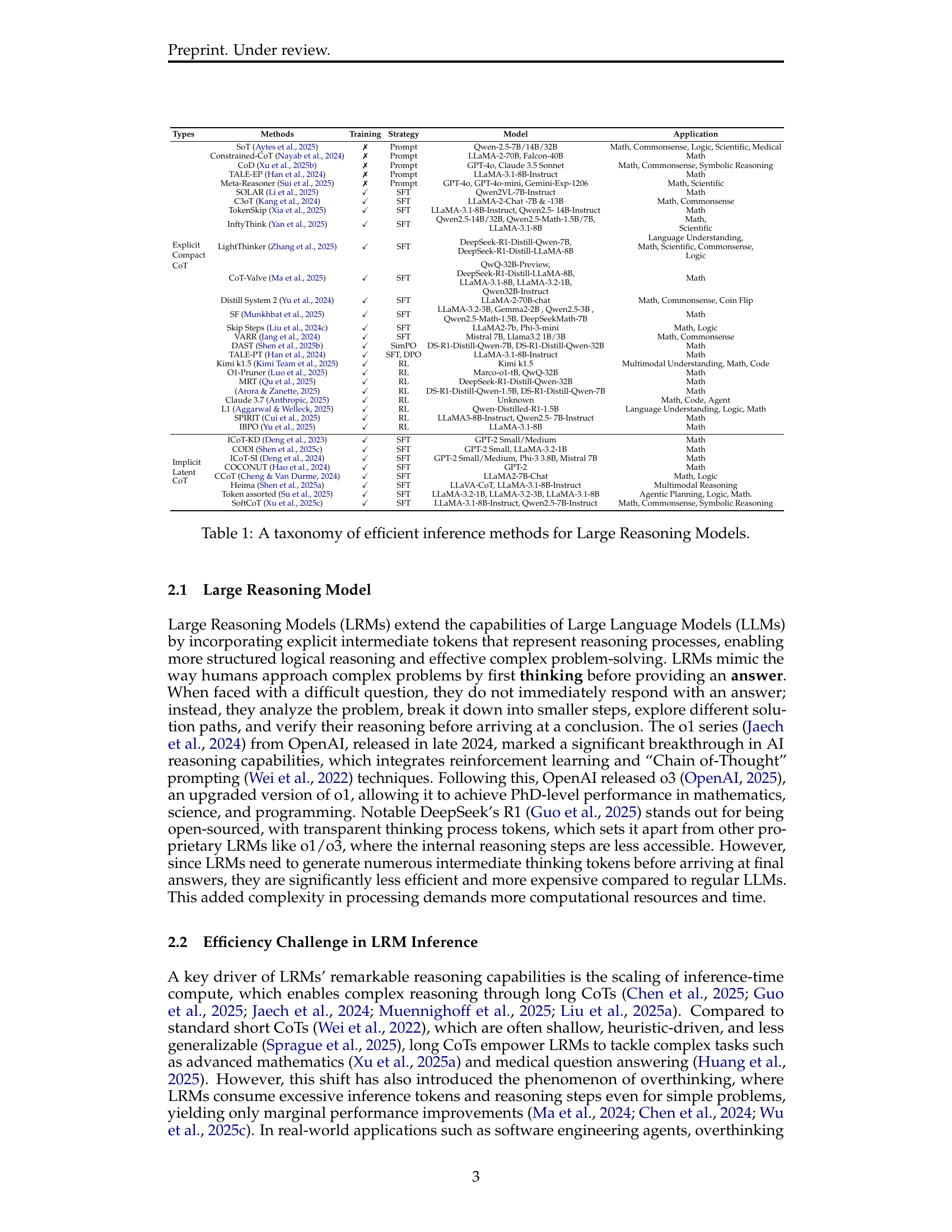

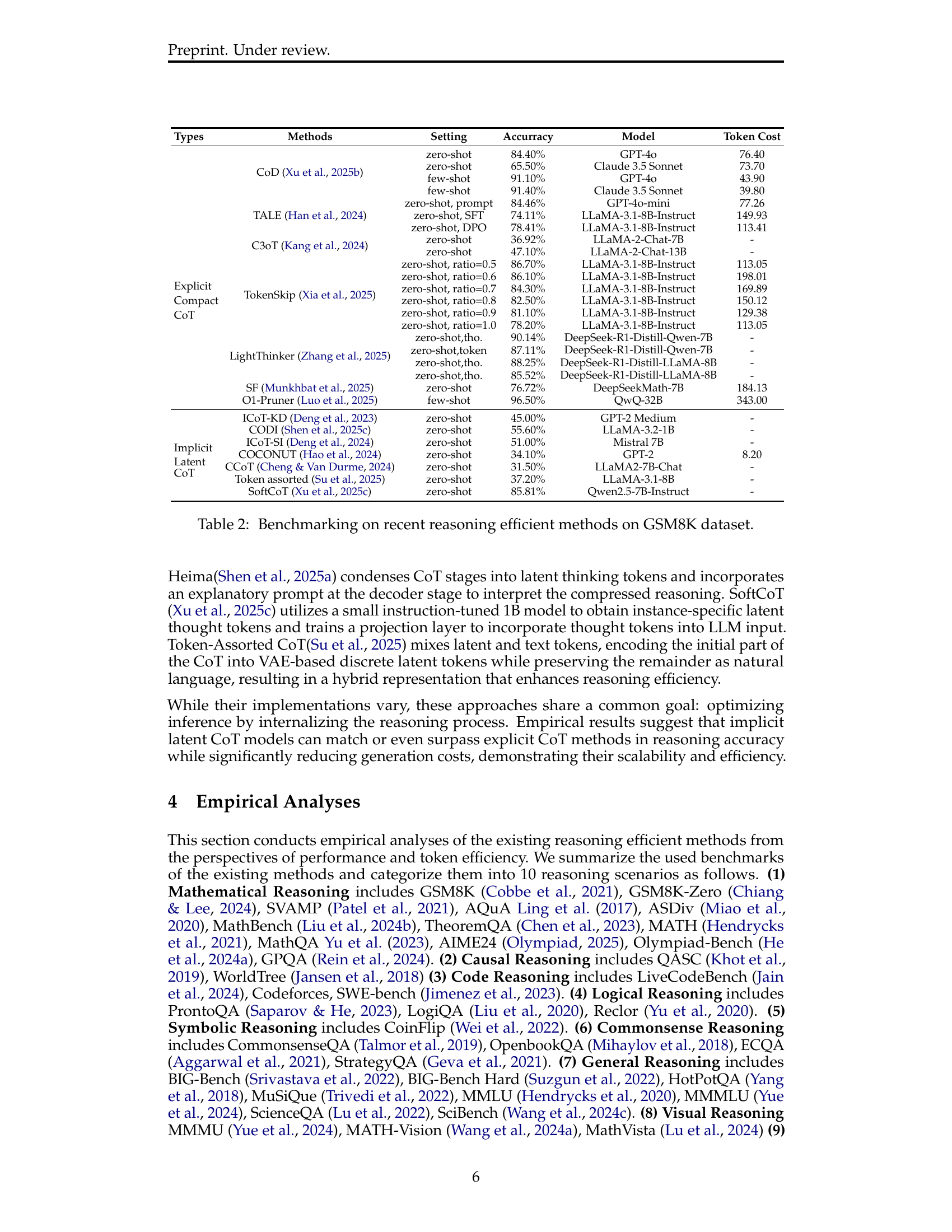

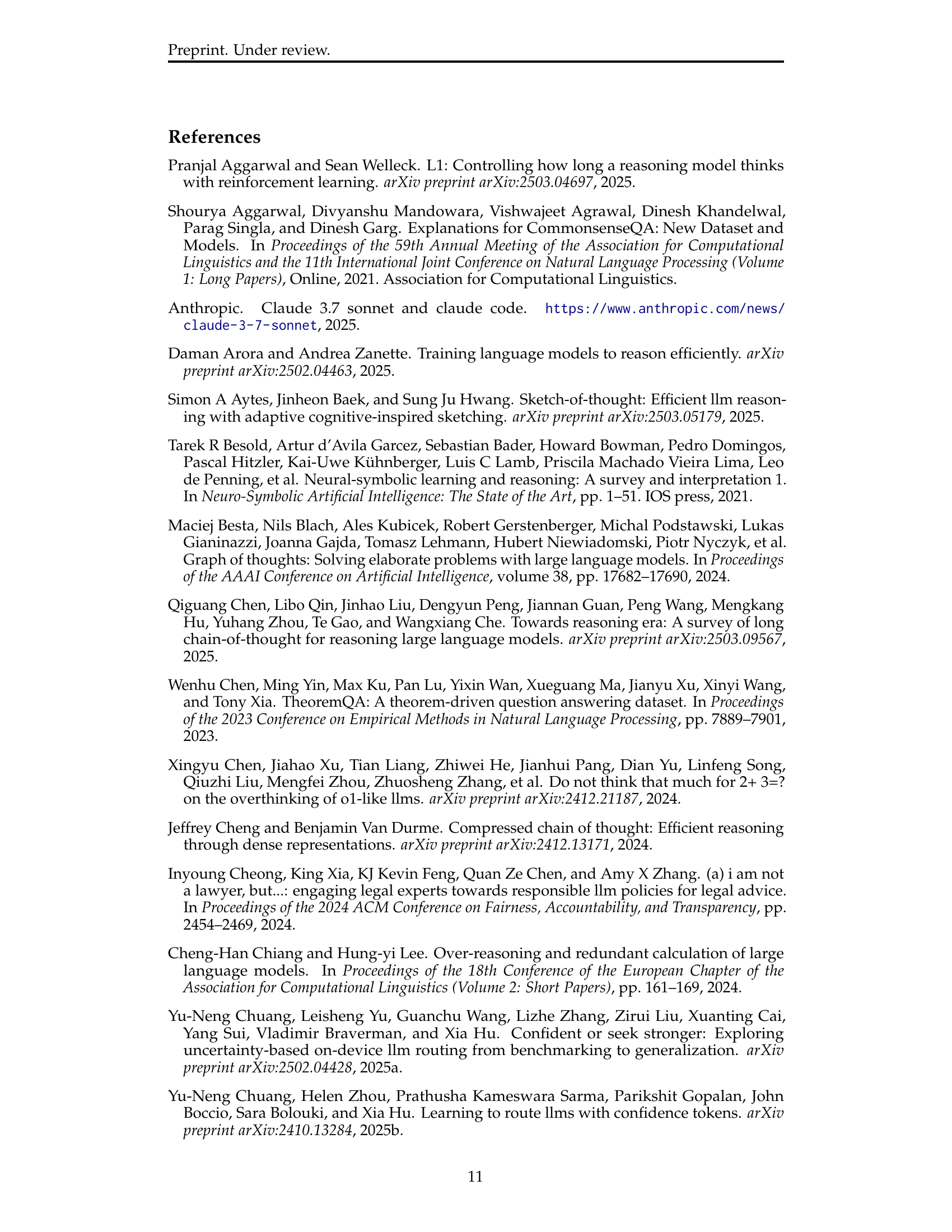

🔼 This figure provides a visual overview of the paper’s structure and the flow of topics discussed. It shows that the paper starts with an introduction to Large Reasoning Models (LRMs) and their efficiency challenges. Then, it presents a taxonomy for categorizing existing efficient inference methods for LRMs into two main types: explicit compact Chain-of-Thought (CoT) and implicit latent CoT. The paper proceeds with empirical analyses of these methods, covering both performance and efficiency aspects. Finally, it discusses open challenges and potential future improvements in the field, such as new architectures, model merging, and agent routers.

Figure 1: Overview Structure of this Survey.

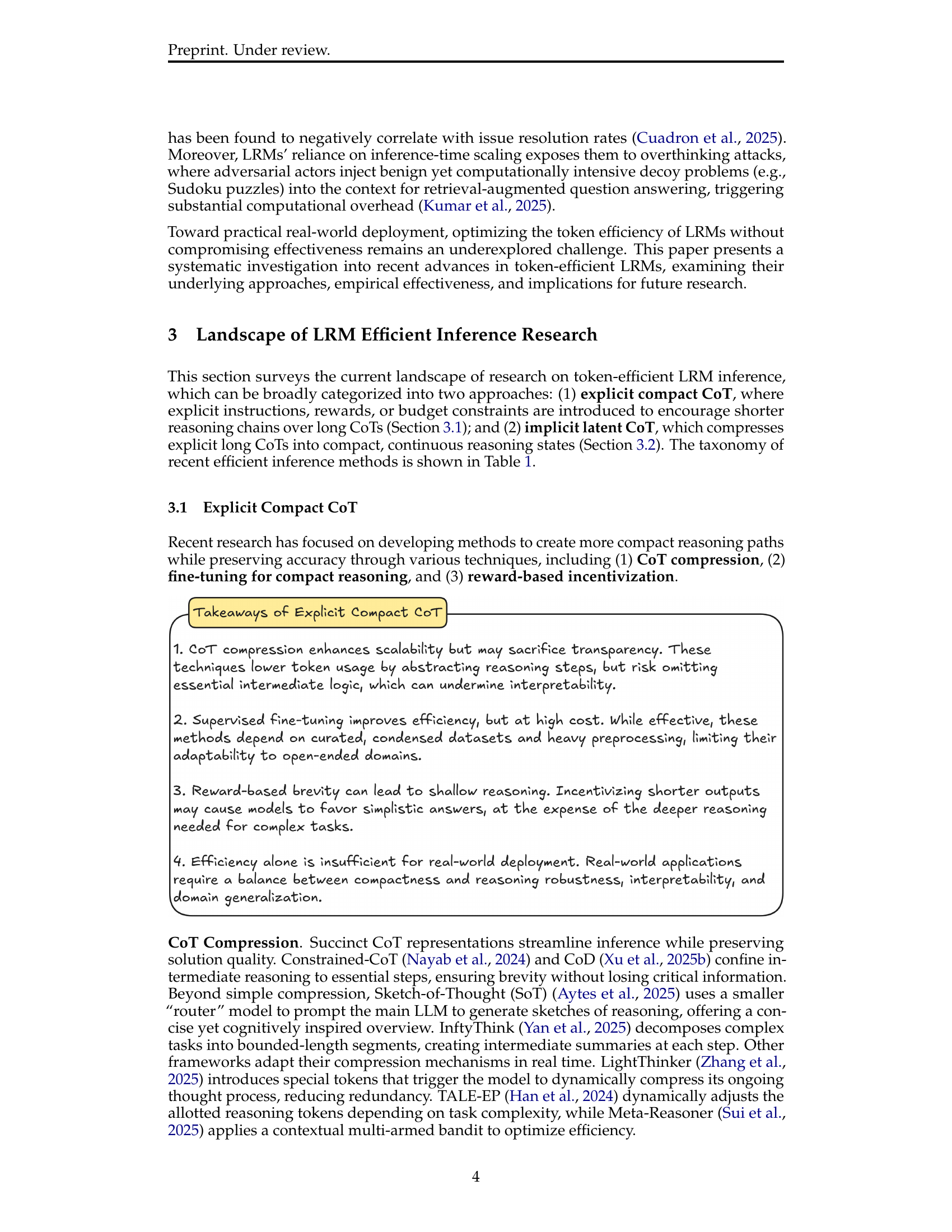

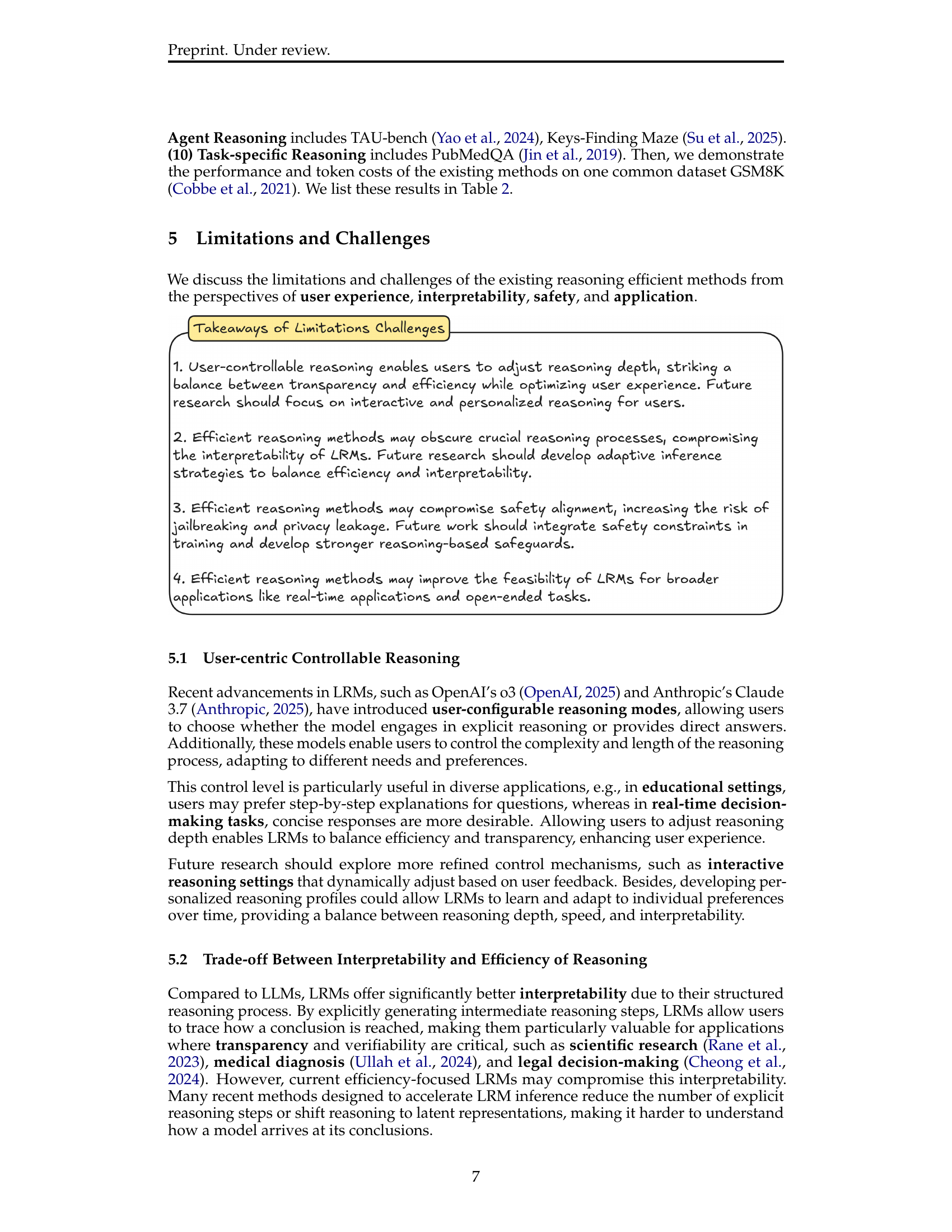

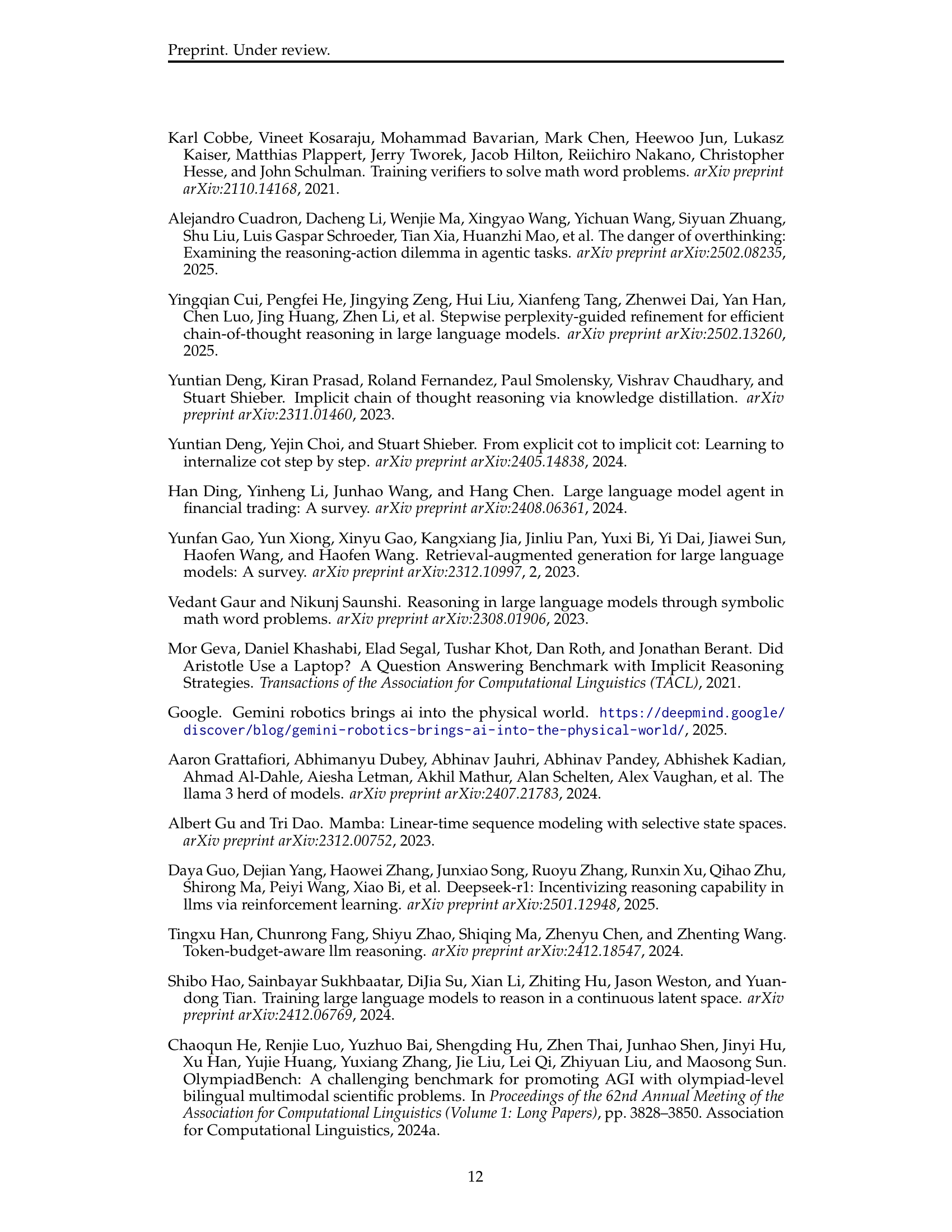

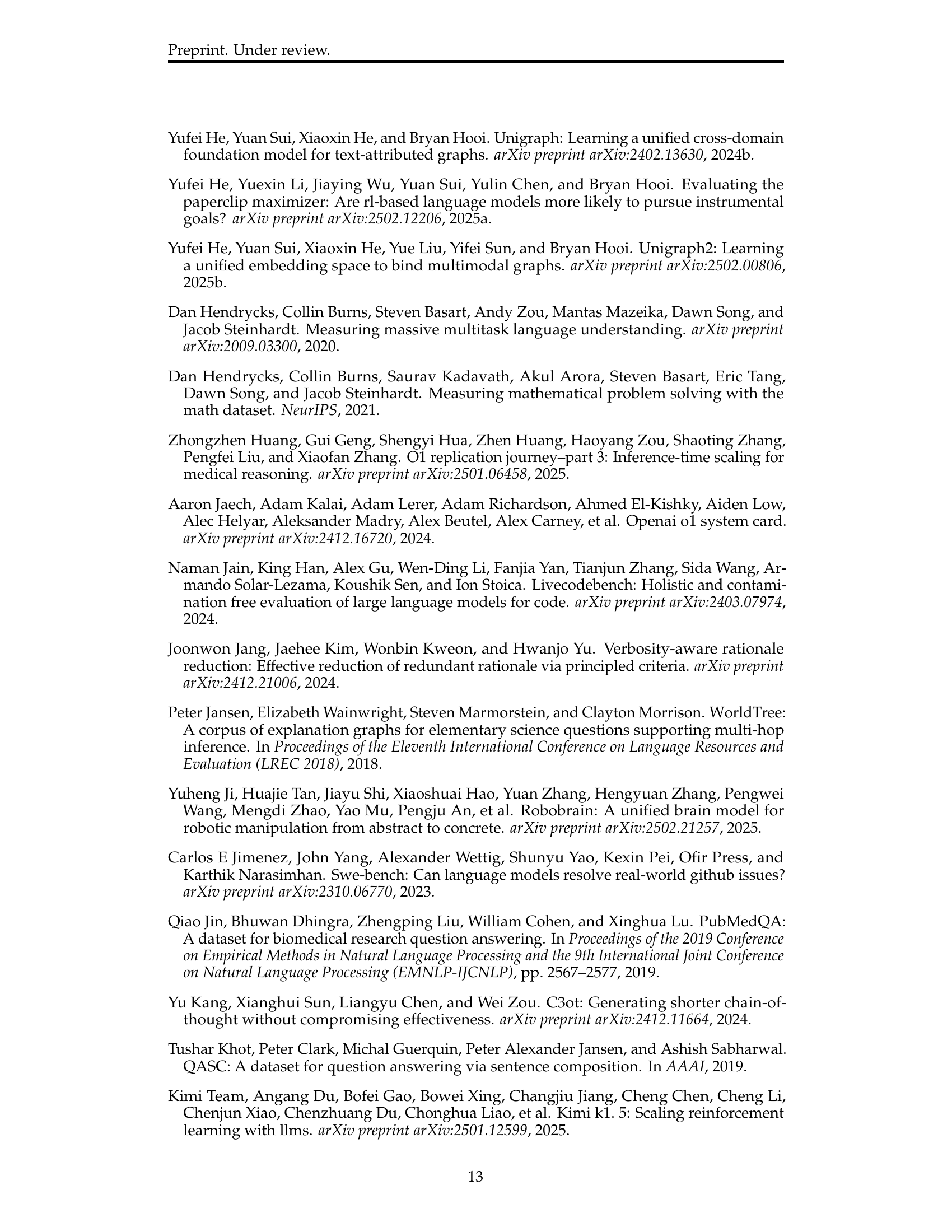

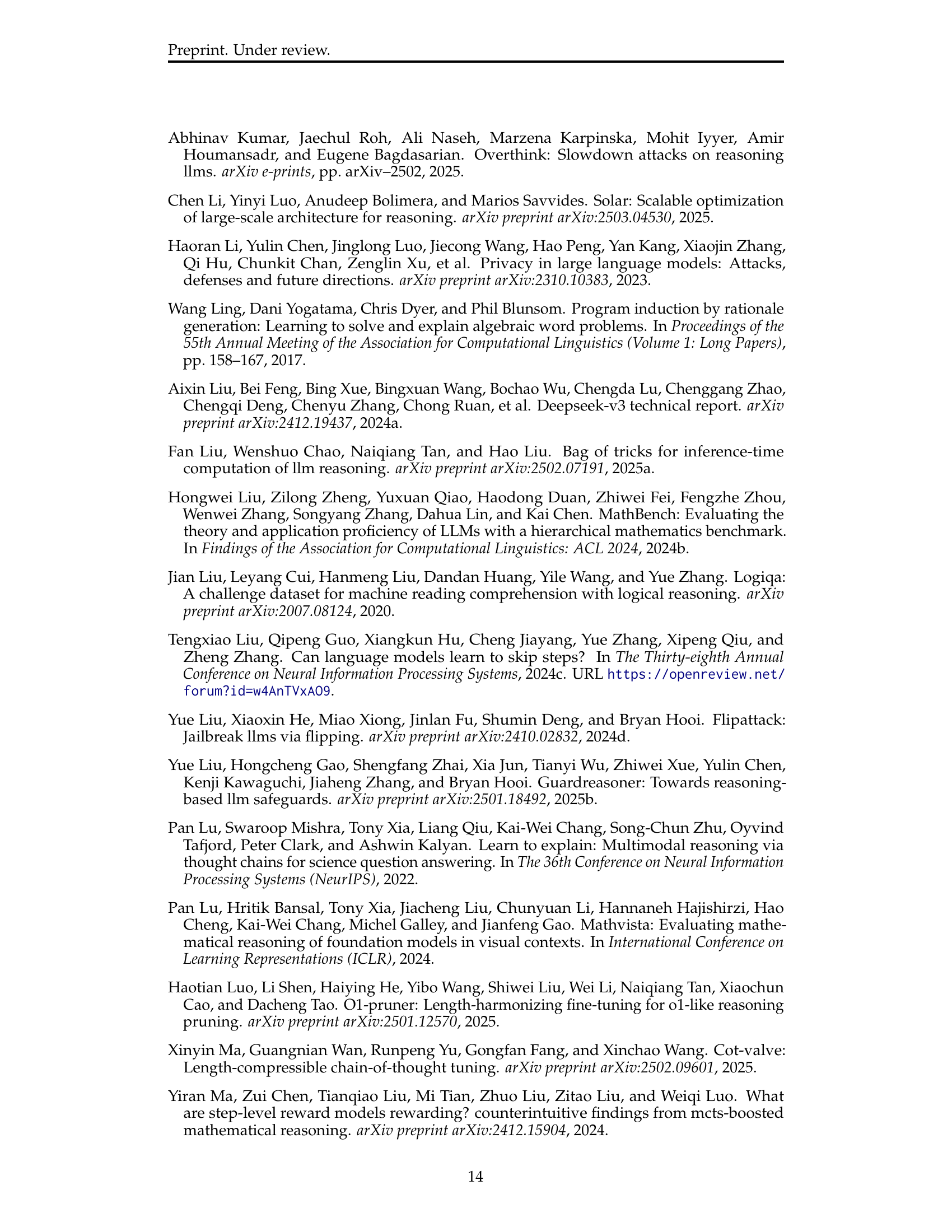

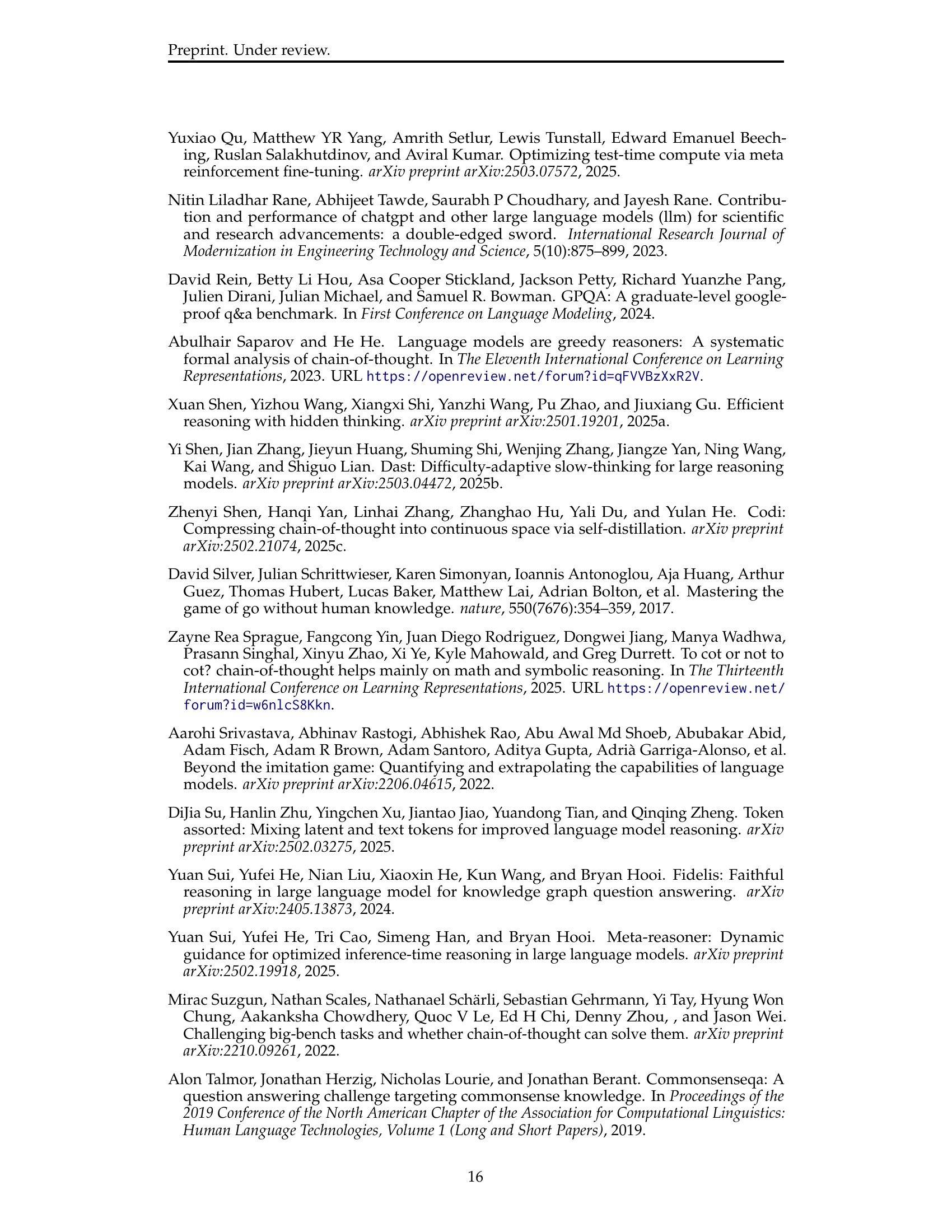

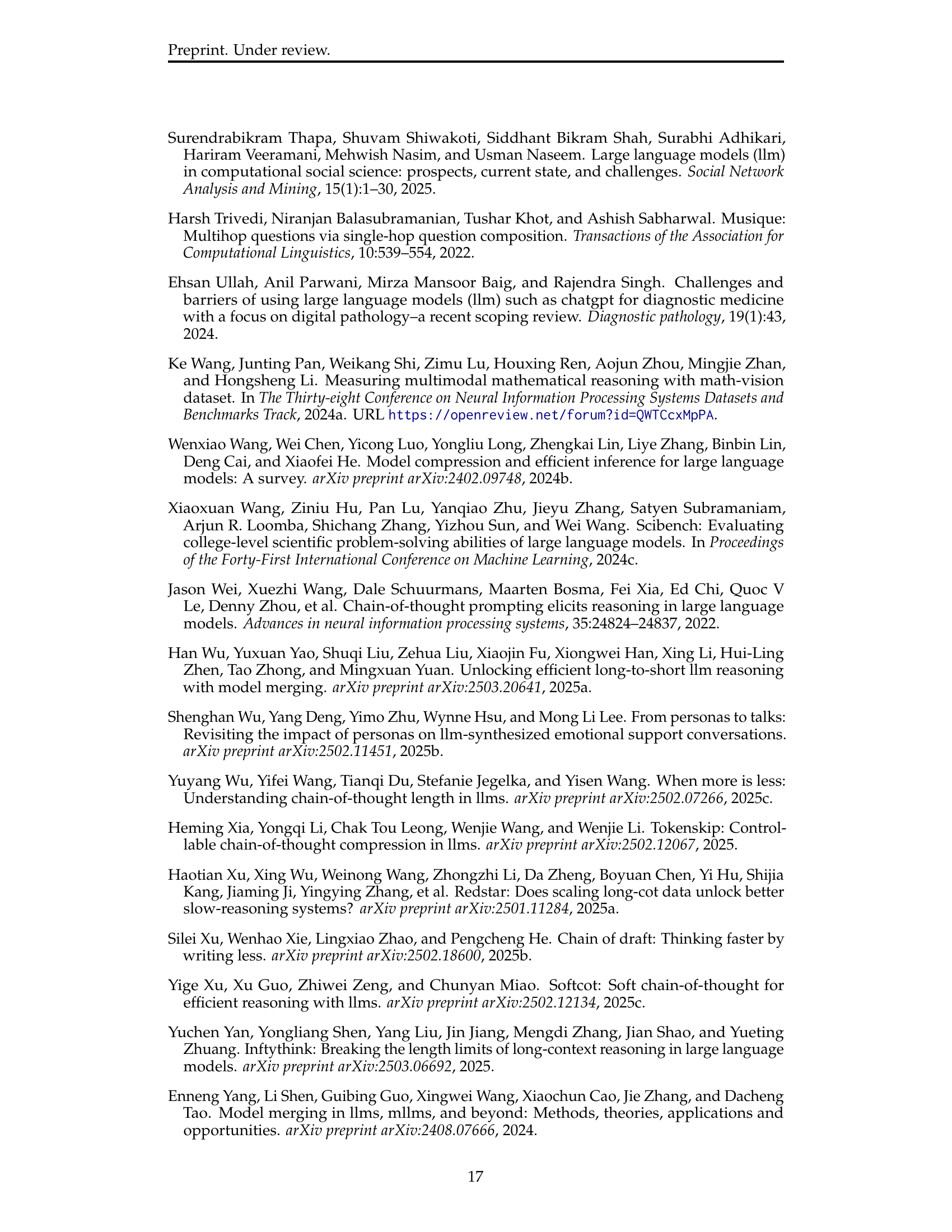

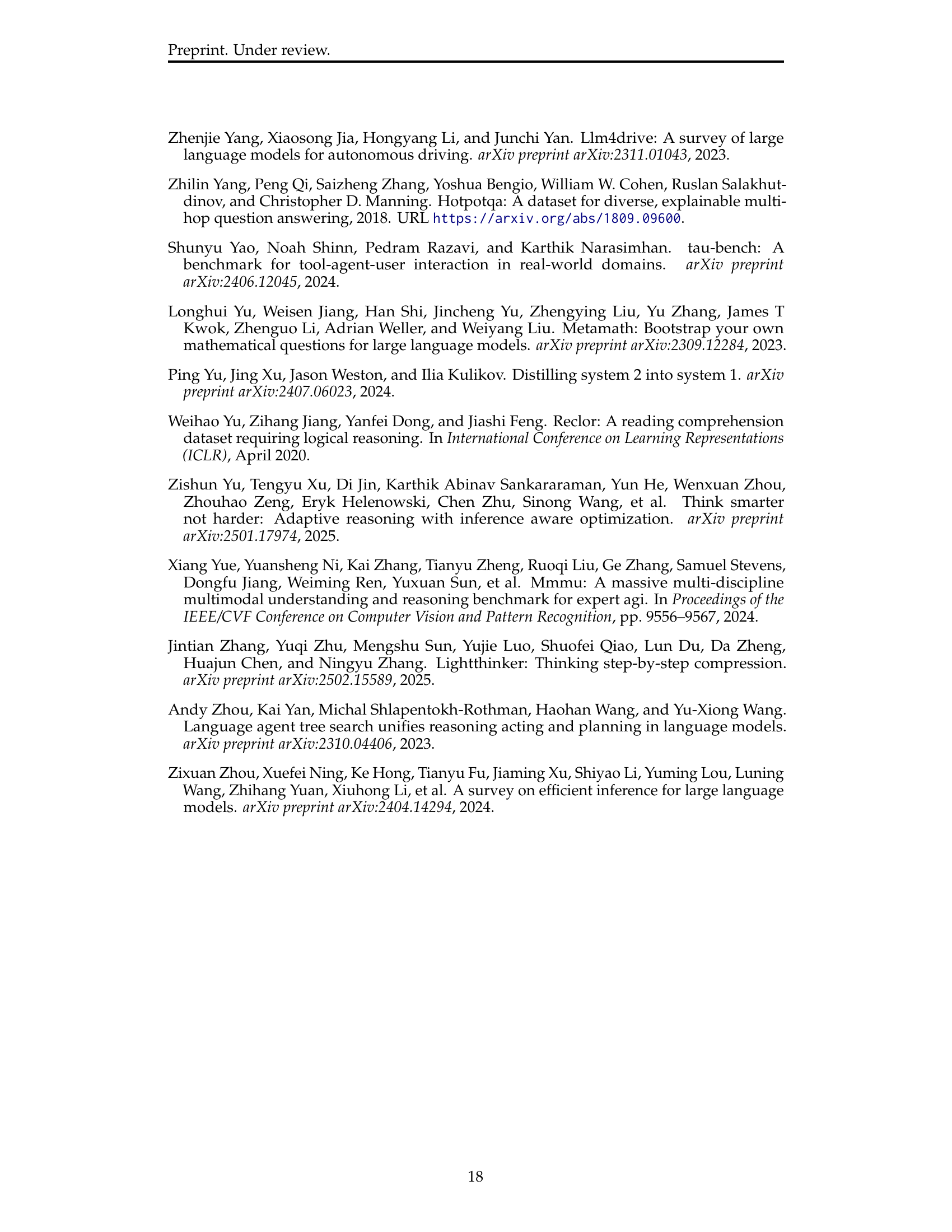

| Types | Methods | Training | Strategy | Model | Application |
| --- | --- | --- | --- | --- | --- |
| Explicit Compact CoT | SoT (Aytes et al., 2025) | ✗ | Prompt | Qwen-2.5-7B/14B/32B | Math, Commonsense, Logic, Scientific, Medical |
| Explicit Compact CoT | Constrained-CoT (Nayab et al., 2024) | ✗ | Prompt | LLaMA-2-70B, Falcon-40B | Math |
| Explicit Compact CoT | CoD (Xu et al., 2025b) | ✗ | Prompt | GPT-4o, Claude 3.5 Sonnet | Math, Commonsense, Symbolic Reasoning |
| Explicit Compact CoT | TALE-EP (Han et al., 2024) | ✗ | Prompt | LLaMA-3.1-8B-Instruct | Math |
| Explicit Compact CoT | Meta-Reasoner (Sui et al., 2025) | ✗ | Prompt | GPT-4o, GPT-4o-mini, Gemini-Exp-1206 | Math, Scientific |
| Explicit Compact CoT | SOLAR (Li et al., 2025) | ✓ | SFT | Qwen2VL-7B-Instruct | Math |
| Explicit Compact CoT | C3oT (Kang et al., 2024) | ✓ | SFT | LLaMA-2-Chat-7B & -13B | Math, Commonsense |
| Explicit Compact CoT | TokenSkip (Xia et al., 2025) | ✓ | SFT | LLaMA-3.1-8B-Instruct, Qwen2.5-14B-Instruct | Math |
| Explicit Compact CoT | InftyThink (Yan et al., 2025) | ✓ | SFT | Qwen2.5-14B/32B, Qwen2.5-Math-1.5B/7B, LLaMA-3.1-8B | Math, Scientific |
| Explicit Compact CoT | LightThinker (Zhang et al., 2025) | ✓ | SFT | DeepSeek-R1-Distill-Qwen-7B, DeepSeek-R1-Distill-LLaMA-8B | Language Understanding, Math, Scientific, Commonsense, Logic |
| Explicit Compact CoT | CoT-Valve (Ma et al., 2025) | ✓ | SFT | QwQ-32B-Preview, DeepSeek-R1-Distill-LLaMA-8B, LLaMA-3.1-8B, LLaMA-3.2-1B, Qwen32B-Instruct | Math |
| Explicit Compact CoT | Distill System 2 (Yu et al., 2024) | ✓ | SFT | LLaMA-2-70B-chat | Math, Commonsense, Coin Flip |
| Explicit Compact CoT | SF (Munkhbat et al., 2025) | ✓ | SFT | LLaMA-3.2-3B, Gemma2-2B, Qwen2.5-3B, Qwen2.5-Math-1.5B, DeepSeekMath-7B | Math |
| Explicit Compact CoT | Skip Steps (Liu et al., 2024c) | ✓ | SFT | LLaMA2-7b, Phi-3-mini | Math, Logic |
| Explicit Compact CoT | VARR (Jang et al., 2024) | ✓ | SFT | Mistral 7B, Llama3.2 1B/3B | Math, Commonsense |
| Explicit Compact CoT | DAST (Shen et al., 2025b) | ✓ | SimPO | DS-R1-Distill-Qwen-7B, DS-R1-Distill-Qwen-32B | Math |
| Explicit Compact CoT | TALE-PT (Han et al., 2024) | ✓ | SFT, DPO | LLaMA-3.1-8B-Instruct | Math |
| Explicit Compact CoT | Kimi k1.5 (Kimi Team et al., 2025) | ✓ | RL | Kimi k1.5 | Multimodal Understanding, Math, Code |
| Explicit Compact CoT | O1-Pruner (Luo et al., 2025) | ✓ | RL | Marco-o1-7B, QwQ-32B | Math |
| Explicit Compact CoT | MRT (Qu et al., 2025) | ✓ | RL | DeepSeek-R1-Distill-Qwen-32B | Math |
| Explicit Compact CoT | (Arora & Zanette, 2025) | ✓ | RL | DS-R1-Distill-Qwen-1.5B, DS-R1-Distill-Qwen-7B | Math |
| Explicit Compact CoT | Claude 3.7 (Anthropic, 2025) | ✓ | RL | Unknown | Math, Code, Agent |
| Explicit Compact CoT | L1 (Aggarwal & Welleck, 2025) | ✓ | RL | Qwen-Distilled-R1-1.5B | Language Understanding, Logic, Math |
| Explicit Compact CoT | SPIRIT (Cui et al., 2025) | ✓ | RL | LLaMA3-8B-Instruct, Qwen2.5-7B-Instruct | Math |
| Explicit Compact CoT | IBPO (Yu et al., 2025) | ✓ | RL | LLaMA-3.1-8B | Math |
| Implicit Latent CoT | ICoT-KD (Deng et al., 2023) | ✓ | SFT | GPT-2 Small/Medium | Math |
| Implicit Latent CoT | CODI (Shen et al., 2025c) | ✓ | SFT | GPT-2 Small, LLaMA-3.2-1B | Math |
| Implicit Latent CoT | ICoT-SI (Deng et al., 2024) | ✓ | SFT | GPT-2 Small/Medium, Phi-3 3.8B, Mistral 7B | Math |
| Implicit Latent CoT | COCONUT (Hao et al., 2024) | ✓ | SFT | GPT-2 | Math |
| Implicit Latent CoT | CCoT (Cheng & Van Durme, 2024) | ✓ | SFT | LLaMA2-7B-Chat | Math, Logic |
| Implicit Latent CoT | Heima (Shen et al., 2025a) | ✓ | SFT | LLaVA-CoT, LLaMA-3.1-8B-Instruct | Multimodal Reasoning |
| Implicit Latent CoT | Token assorted (Su et al., 2025) | ✓ | SFT | LLaMA-3.2-1B, LLaMA-3.2-3B, LLaMA-3.1-8B | Agentic Planning, Logic, Math |
| Implicit Latent CoT | SoftCoT (Xu et al., 2025c) | ✓ | SFT | LLaMA-3.1-8B-Instruct, Qwen2.5-7B-Instruct | Math, Commonsense, Symbolic Reasoning |

🔼 This table presents a taxonomy of efficient inference methods designed specifically for Large Reasoning Models (LRMs), that is, methods that reduce token usage while preserving reasoning quality. It distinguishes explicit compact Chain-of-Thought (CoT) methods, which reduce tokens while keeping an explicit reasoning structure, from implicit latent CoT methods, which encode reasoning steps in hidden representations instead of explicit tokens. For each method, the table lists its type, its name and citation, whether it requires training (✓ or ✗), the strategy it uses (e.g., prompting, Supervised Fine-Tuning (SFT), or Reinforcement Learning (RL)), the models used in its experiments, and the applications it has been evaluated on.

Table 1: A taxonomy of efficient inference methods for Large Reasoning Models.
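
As a rough illustration of how the taxonomy in Table 1 can be queried, the sketch below encodes a handful of its rows as records and groups them by strategy or CoT type. The `Method` class, its field names, and the helper functions are hypothetical names chosen for this example; only the row values (method name, type, training flag, strategy) are copied from the table, and the list is deliberately not the full table.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Method:
    """One row of the taxonomy (field names are illustrative assumptions)."""
    name: str
    cot_type: str         # "explicit" (compact CoT) or "implicit" (latent CoT)
    needs_training: bool  # the Training column: True for ✓, False for ✗
    strategy: str         # the Strategy column: "Prompt", "SFT", "RL", ...


# A small subset of Table 1, not the complete list.
METHODS = [
    Method("SoT", "explicit", False, "Prompt"),
    Method("CoD", "explicit", False, "Prompt"),
    Method("TokenSkip", "explicit", True, "SFT"),
    Method("O1-Pruner", "explicit", True, "RL"),
    Method("L1", "explicit", True, "RL"),
    Method("COCONUT", "implicit", True, "SFT"),
    Method("SoftCoT", "implicit", True, "SFT"),
]


def group_by(methods, key):
    """Map each distinct value of attribute `key` to the method names having it."""
    groups = defaultdict(list)
    for m in methods:
        groups[getattr(m, key)].append(m.name)
    return dict(groups)


def training_free(methods):
    """Names of methods that need no training (the ✗ rows)."""
    return [m.name for m in methods if not m.needs_training]


if __name__ == "__main__":
    print(group_by(METHODS, "strategy"))
    print(group_by(METHODS, "cot_type"))
    print(training_free(METHODS))
```

In this subset, the training-free methods are exactly the prompt-based ones, which matches the pattern visible in the table: every ✗ row uses the Prompt strategy.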

Full paper