TL;DR#

Current image generation models struggle with rendering visual text, especially in complex scenarios, leading to text distortion, omission, and blurriness. This paper tackles these challenges in Complex Visual Text Generation (CVTG) by introducing TextCrafter, a novel multi-visual text rendering method. TextCrafter employs a progressive strategy to decompose complex visual text into distinct components, ensuring alignment between textual content and its visual carrier. It incorporates a token focus enhancement mechanism to amplify the prominence of visual text.

The authors present the CVTG-2K dataset to evaluate generative models on CVTG tasks. TextCrafter consists of three key stages: instance fusion to strengthen the connection between visual text and its carrier; region insulation to initialize layout information and separate text prompts across regions; and text focus to enhance visual text attention maps. Extensive experiments show that TextCrafter outperforms state-of-the-art approaches.

Key Takeaways#

Why does it matter?#

This paper introduces TextCrafter and the CVTG-2K dataset, offering a new approach to generating accurate and contextually relevant visual text in complex images. It addresses limitations in current models and opens new avenues for research in multi-instance visual generation and text rendering, pushing the boundaries of AI’s ability to integrate text seamlessly into visual content.

Visual Insights#

🔼 Figure 1 showcases TextCrafter’s ability to generate images with multiple text regions, each exhibiting varying lengths, sizes, styles, numbers, and symbols. It directly addresses common issues in visual text generation, such as distortion, blurriness, and omission. The figure provides a visual comparison of TextCrafter’s output against three state-of-the-art models (FLUX, TextDiffuser-2, and 3DIS) across several diverse scenarios, highlighting TextCrafter’s superior accuracy and detail in rendering complex visual text.

read the caption

Figure 1: TextCrafter enables precise multi-region visual text rendering, addressing the challenges of long, small-size,various numbers, symbols and styles in visual text generation. We illustrate the comparisons among TextCrafter with three state-of-the-art models, i.e., FLUX, TextDiffuser-2 and 3DIS.

| Benchmark | Number | Word | Char | Attribute | Region | ||||

|---|---|---|---|---|---|---|---|---|---|

| CreativeBench | 400 | 1.00 | 7.29 | × | × | ||||

| MARIOEval | 5414 | 2.92 | 15.47 | × | × | ||||

| DrawTextExt | 220 | 3.75 | 17.01 | × | × | ||||

| AnyText-benchmark | 1000 | 4.18 | 21.84 | × | × | ||||

| CVTG-2K | 2000 | 8.10 | 39.47 |

|

|

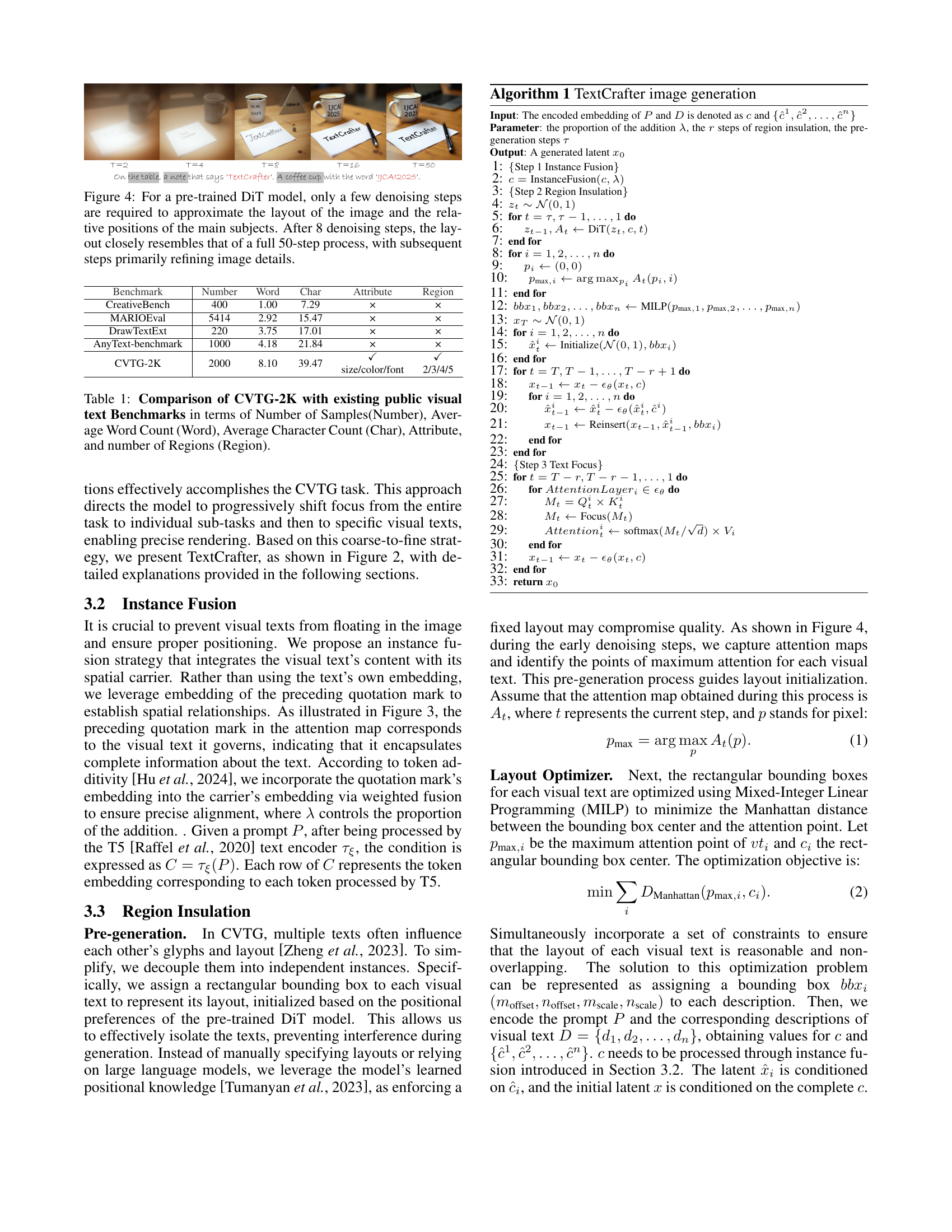

🔼 This table compares the CVTG-2K dataset with other publicly available visual text benchmark datasets. It provides a quantitative comparison across several key features, including the total number of samples, the average number of words and characters per sample, whether attributes (like size, color, or font) are included in the annotations, and the average number of regions containing text within each sample. This comparison highlights the relative size and complexity of CVTG-2K compared to existing benchmarks.

read the caption

Table 1: Comparison of CVTG-2K with existing public visual text Benchmarks in terms of Number of Samples(Number), Average Word Count (Word), Average Character Count (Char), Attribute, and number of Regions (Region).

In-depth insights#

TextCrafter: CVTG#

TextCrafter tackles the Complex Visual Text Generation (CVTG) task by generating intricate textual content across diverse visual regions within images. Image generation models in CVTG often distort, blur, or omit visual text, which TextCrafter addresses with a novel multi-visual text rendering method. Employing a progressive strategy, TextCrafter deconstructs complex visual text into components while aligning content and its visual carrier, using a token focus enhancement to emphasize text during generation. It tackles text confusion, omissions, and blurriness. TextCrafter outperforms current methods and is rigorously evaluated on the CVTG-2K dataset.

Instance Fusion#

Instance Fusion aims to accurately position visual text within an image by integrating text content with its spatial carrier. It leverages the embedding of the preceding quotation mark, which encapsulates complete information about the text, to establish spatial relationships. By incorporating this embedding into the carrier’s embedding via weighted fusion, it ensures precise alignment and prevents text from floating in incorrect positions. This strategy enhances the model’s ability to generate images where visual text is logically and spatially consistent with the surrounding elements, improving the overall coherence and realism of the generated scene. The fusion process is controlled by a parameter λ, which determines the proportion of the quotation mark’s embedding added to the carrier’s embedding, allowing for fine-tuning of the spatial integration.

Region Control#

While the title ‘Region Control’ isn’t explicitly present, the paper introduces a region insulation strategy that implicitly manages different regions of interest where texts appear. Region insulation is key to disentangling complexity in CVTG by separating text instances and preventing glyph or layout interference. The study leveraged the DiT model’s positional priors to initialize layouts and assigned bounding boxes to each visual text, essentially isolating them for individual processing. This approach, in contrast to relying on LLMs or manual layout, harnesses the model’s inherent spatial understanding. The region control ensures text boundaries were well-defined to harmonize the overall image, preventing confusion. Ablation studies revealed its effectiveness in reducing text interference. This form of localized control is vital for precise manipulation and coherency, as the quality of the generated visual texts is greatly improved with the technique.

CVTG-2K dataset#

The paper introduces CVTG-2K, a novel dataset addressing the limitations of existing visual text benchmarks. CVTG-2K tackles complex visual text generation, featuring diverse scenes, multiple text regions (2-5), varied text lengths (avg. 8.10 words, 39.47 characters), and attributes (size, color, font). Unlike fixed-template datasets, CVTG-2K ensures diversity and real-world relevance through prompts generated by OpenAI’s O1-mini API using Chain-of-Thought techniques. The dataset includes fine-grained information, decoupling prompts and specifying carrier-text relationships. Rigorous filtering and manual review ensures quality and avoids harmful content. Public release alongside the code will foster research in complex visual text generation.

Focus on small Text#

Focusing on small text within complex visual scenes presents a significant challenge in text generation. Accurately rendering small text requires high fidelity to avoid blurriness and maintain legibility, which is crucial for conveying information effectively. The model needs to allocate sufficient attention to these regions, ensuring that the details are preserved during the generation process. Techniques such as attention control mechanisms and token focus enhancement can be employed to amplify the prominence of small text. This involves refining the attention maps to highlight textual elements and re-weighting the relevant features. Addressing the small-text challenge is important for enhancing the overall quality and informativeness of generated images, particularly in scenarios where textual information is a key component.

More visual insights#

More on figures

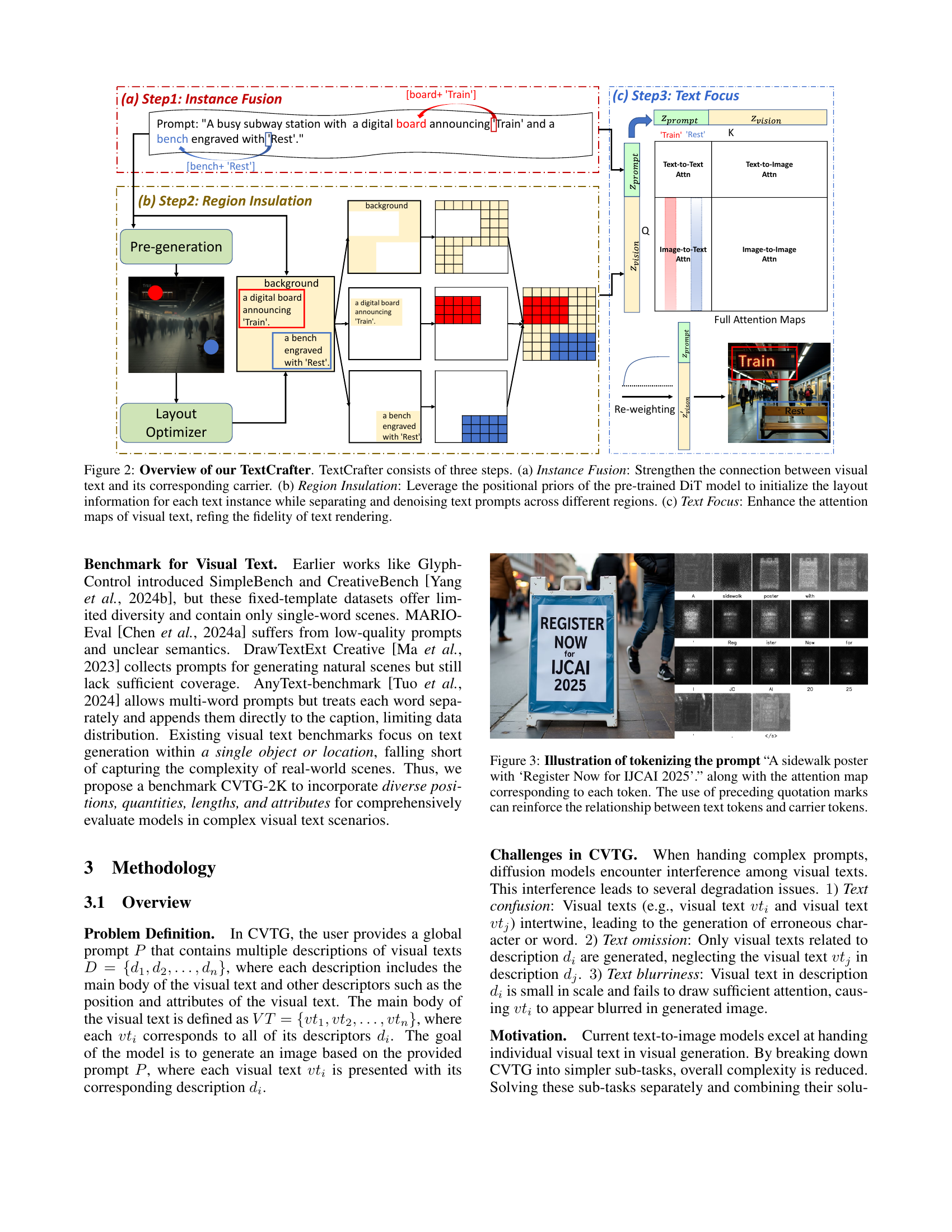

🔼 TextCrafter, a novel framework for complex visual text generation, is illustrated in this figure. It consists of three main steps: 1) Instance Fusion: This step ensures precise alignment between textual content and its visual carrier by integrating the embedding of the preceding quotation mark into the carrier’s embedding. This step strengthens the connection between the visual text and its surrounding environment, preventing the text from appearing in incorrect positions. 2) Region Insulation: This step leverages the positional priors of the pre-trained DiT model to initialize the layout information for each text instance while separating and denoising text prompts across different regions. It prevents early interference between text areas, thereby reducing confusion and the risk of content omission in multi-text scenarios. 3) Text Focus: This step enhances the attention maps of visual text, improving the fidelity of text rendering and addressing blurriness, especially in smaller text. This is achieved by introducing an attention control mechanism to amplify the prominence of visual text during the generation process.

read the caption

Figure 2: Overview of our TextCrafter. TextCrafter consists of three steps. (a) Instance Fusion: Strengthen the connection between visual text and its corresponding carrier. (b) Region Insulation: Leverage the positional priors of the pre-trained DiT model to initialize the layout information for each text instance while separating and denoising text prompts across different regions. (c) Text Focus: Enhance the attention maps of visual text, refing the fidelity of text rendering.

🔼 Figure 3 visualizes the process of tokenizing a complex prompt for visual text generation. The prompt, ‘A sidewalk poster with ‘Register Now for IJCAI 2025’’, is broken down into individual tokens, including punctuation. The image displays the attention map associated with each token, highlighting how the model focuses on different parts of the prompt. Notably, the figure shows how the inclusion of quotation marks around ‘Register Now for IJCAI 2025’ strengthens the association between the text phrase and its visual representation (the poster). This demonstrates a key aspect of the proposed TextCrafter model, which uses such techniques to improve the accuracy of complex visual text generation.

read the caption

Figure 3: Illustration of tokenizing the prompt “A sidewalk poster with ‘Register Now for IJCAI 2025’.” along with the attention map corresponding to each token. The use of preceding quotation marks can reinforce the relationship between text tokens and carrier tokens.

🔼 This figure demonstrates the efficiency of the TextCrafter model’s pre-generation phase. It shows that using a pre-trained Diffusion-based Image Transformer (DiT) model, a good approximation of the final image layout and the relative positions of key objects can be achieved with far fewer denoising steps than a full generation. The comparison highlights that after only 8 denoising steps, the generated layout is already very similar to the layout produced after 50 steps. The remaining steps primarily focus on refining the details and visual quality of the image, rather than establishing the overall structure.

read the caption

Figure 4: For a pre-trained DiT model, only a few denoising steps are required to approximate the layout of the image and the relative positions of the main subjects. After 8 denoising steps, the layout closely resembles that of a full 50-step process, with subsequent steps primarily refining image details.

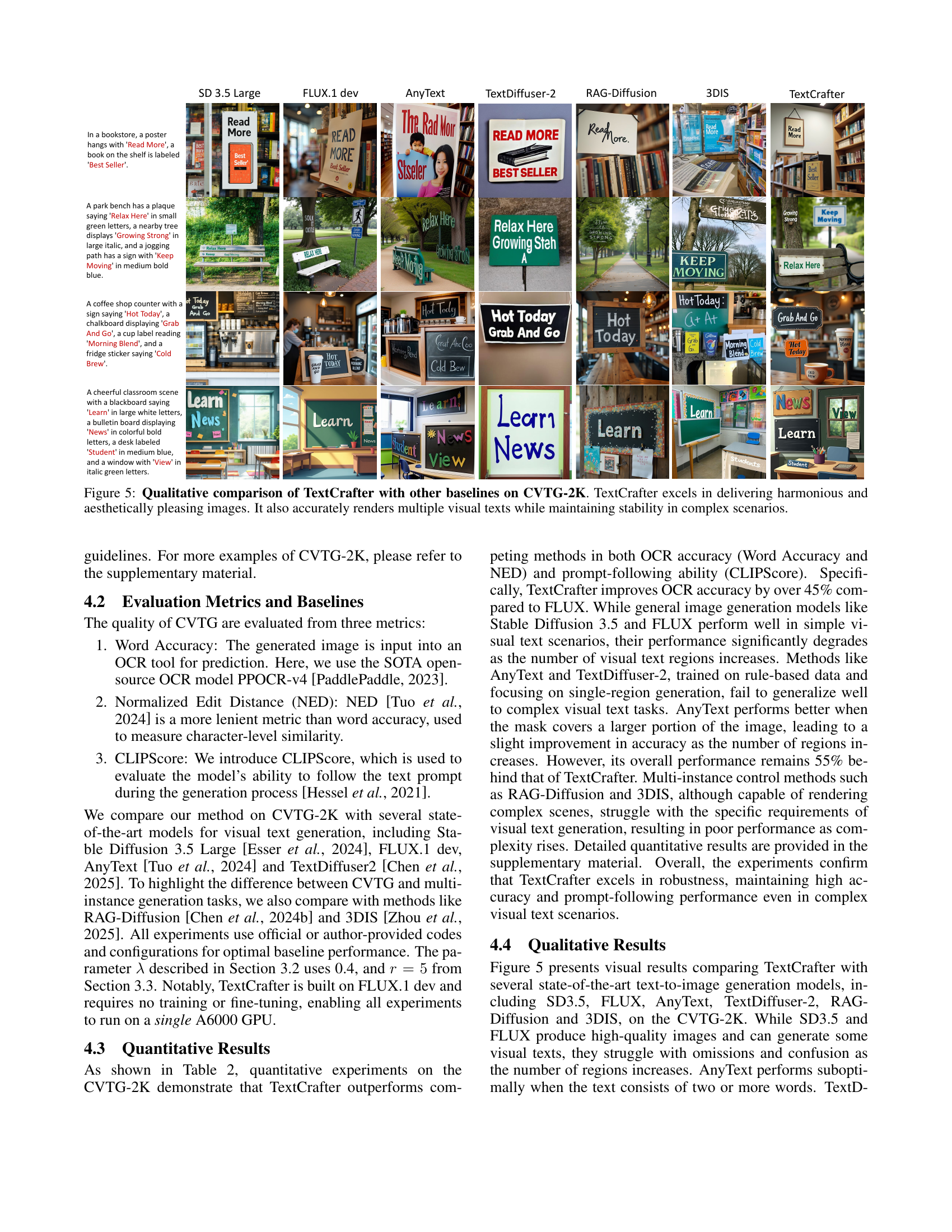

🔼 Figure 5 presents a qualitative comparison of TextCrafter’s performance against several other state-of-the-art baselines on the CVTG-2K benchmark dataset. The figure showcases example outputs from each model, highlighting TextCrafter’s ability to generate visually harmonious and aesthetically pleasing images with multiple text instances. In contrast to the baseline methods, which often struggle with text distortion, omissions, and blurring, particularly in complex scenarios, TextCrafter consistently renders multiple texts accurately and maintains stability across diverse visual text layouts.

read the caption

Figure 5: Qualitative comparison of TextCrafter with other baselines on CVTG-2K. TextCrafter excels in delivering harmonious and aesthetically pleasing images. It also accurately renders multiple visual texts while maintaining stability in complex scenarios.

More on tables

| size/color/font |

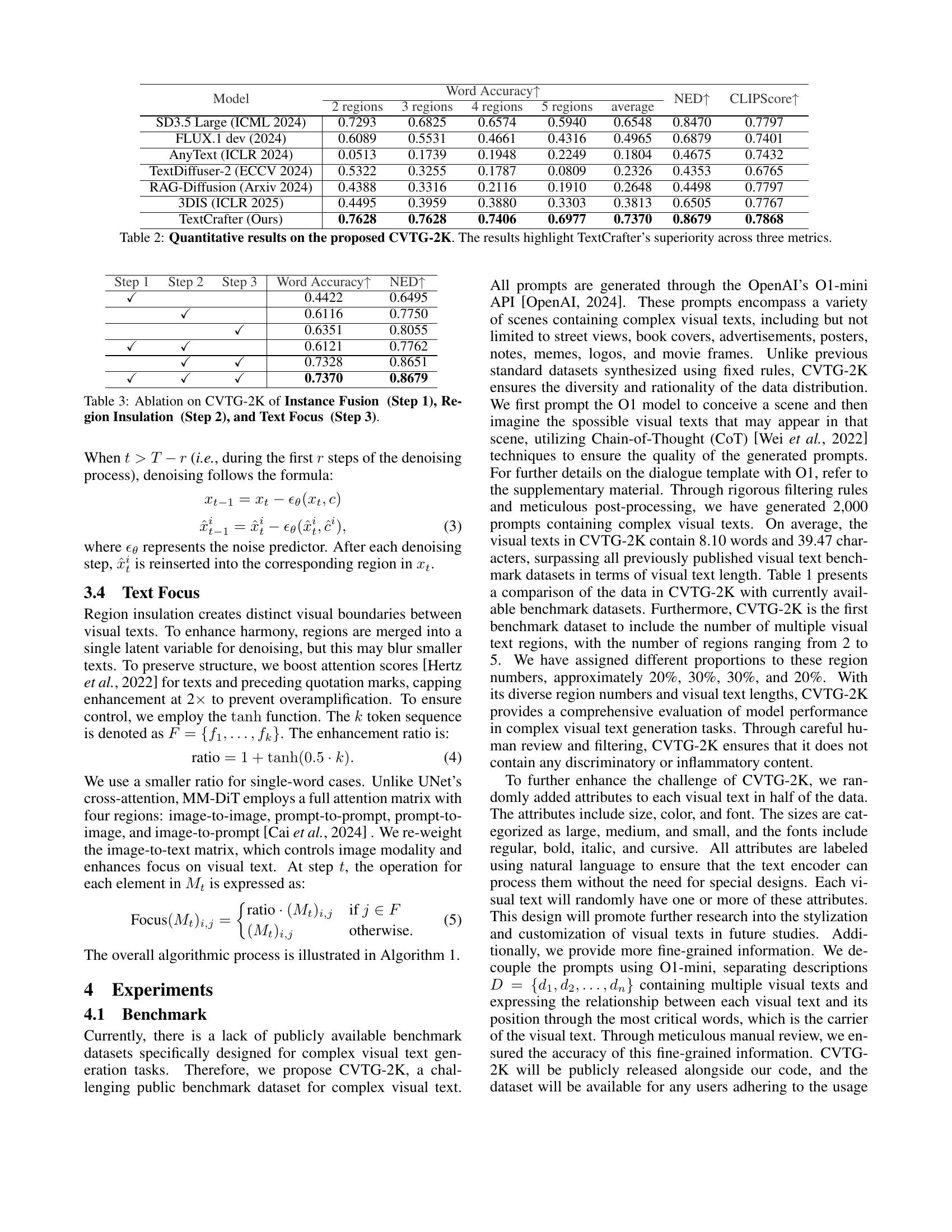

🔼 This table presents a quantitative comparison of TextCrafter against several state-of-the-art models on the CVTG-2K benchmark dataset. The benchmark evaluates the performance of complex visual text generation models. Three metrics are used to assess the models: Word Accuracy (measuring the accuracy of generated text), Normalized Edit Distance (NED, a more lenient metric that considers character-level similarity), and CLIPScore (an assessment of how well the generated image aligns with the given text prompt). The results demonstrate TextCrafter’s superior performance across all three metrics, highlighting its ability to accurately generate complex visual text scenes.

read the caption

Table 2: Quantitative results on the proposed CVTG-2K. The results highlight TextCrafter’s superiority across three metrics.

| 2/3/4/5 |

🔼 This table presents the ablation study results on the CVTG-2K benchmark dataset. It shows the impact of each step in the TextCrafter model: Instance Fusion (Step 1), Region Insulation (Step 2), and Text Focus (Step 3) on the Word Accuracy and NED metrics. The results demonstrate the individual and combined contributions of each step to the overall performance of the model. It quantifies how each component of the proposed TextCrafter method impacts the final results.

read the caption

Table 3: Ablation on CVTG-2K of Instance Fusion (Step 1), Region Insulation (Step 2), and Text Focus (Step 3).

Full paper#