TL;DR#

Unified multimodal models face quadratic complexity & data dependence issues. Existing models are slow and impractical for real-time use due to computational demands. OmniMamba uses Mamba-2’s efficiency, extending it from text to multimodal generation via next-token prediction, reducing reliance on extensive training data and improves both training and inference.

OmniMamba employs decoupled vocabularies for modality-specific generation, task-specific LoRA for adaptation, and a two-stage training strategy to balance data. It achieves comparable or superior performance with significantly reduced training data (2M image-text pairs) compared to existing models. Results show 119.2x speedup & 63% memory reduction for long sequences.

Key Takeaways#

Why does it matter?#

This paper introduces a new model overcoming the quadratic computational complexity & large-scale data dependency of current multimodal models, paving the way for more efficient & accessible research and applications.

Visual Insights#

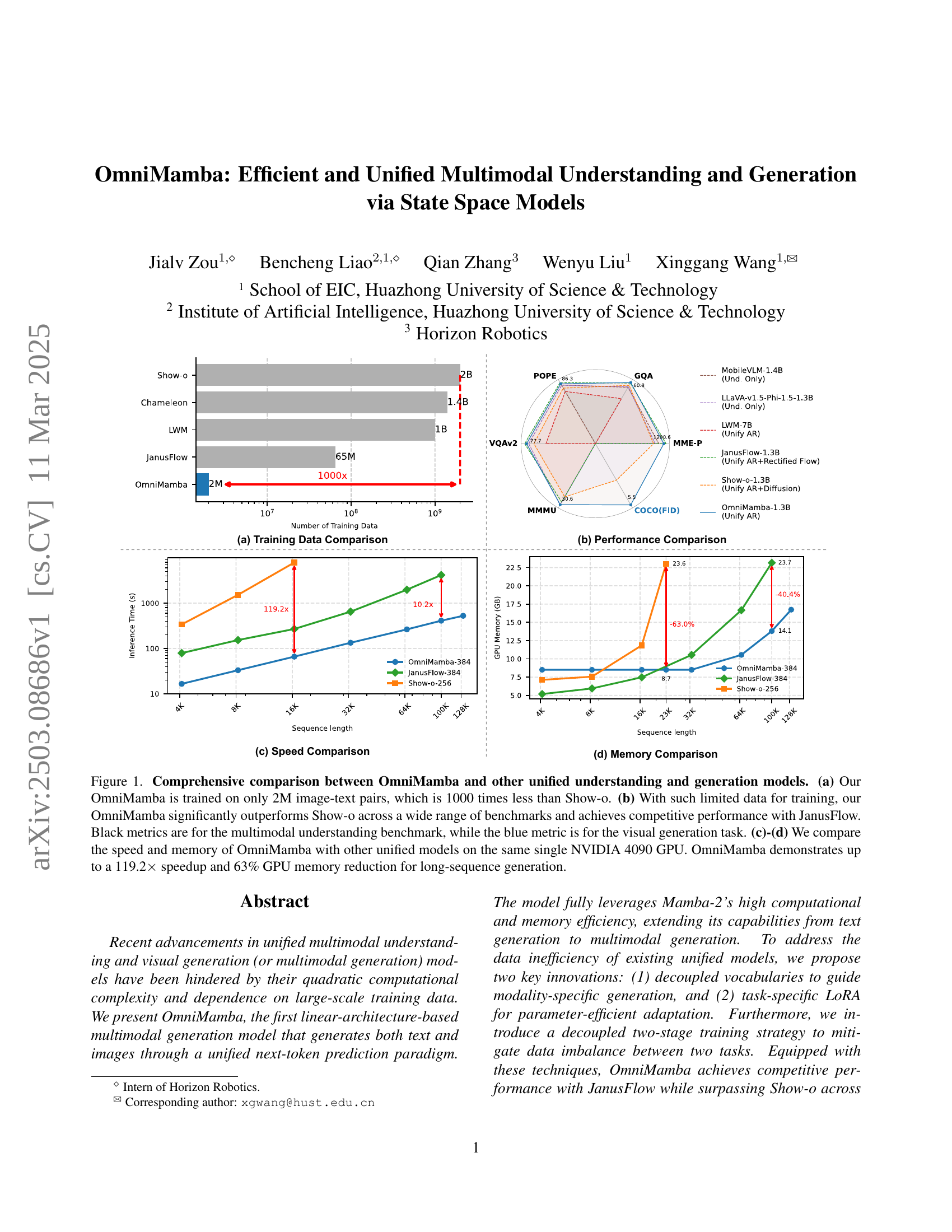

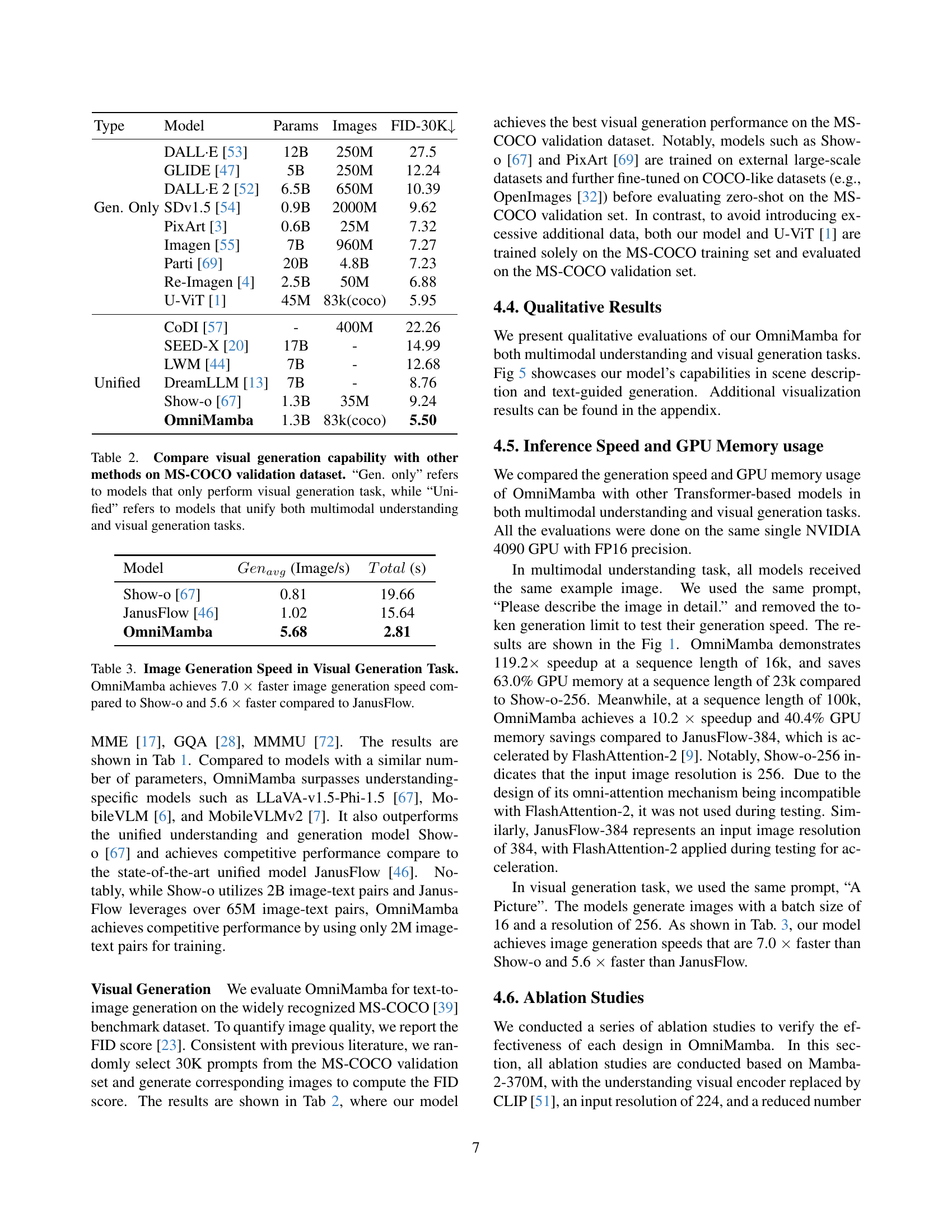

🔼 Figure 1 presents a comprehensive comparison of OmniMamba against other state-of-the-art unified multimodal understanding and generation models. Panel (a) highlights OmniMamba’s data efficiency, trained on a mere 2 million image-text pairs—1000 times less data than Show-o. Panel (b) showcases OmniMamba’s superior performance on various benchmarks, surpassing Show-o and achieving comparable results to JanusFlow despite its significantly smaller training dataset. Black metrics represent multimodal understanding, while blue represents visual generation tasks. Panels (c) and (d) demonstrate OmniMamba’s speed and memory efficiency on a single NVIDIA A40 GPU, achieving up to a 119.2x speedup and a 63% memory reduction during long-sequence generation.

read the caption

Figure 1: Comprehensive comparison between OmniMamba and other unified understanding and generation models. (a) Our OmniMamba is trained on only 2M image-text pairs, which is 1000 times less than Show-o. (b) With such limited data for training, our OmniMamba significantly outperforms Show-o across a wide range of benchmarks and achieves competitive performance with JanusFlow. Black metrics are for the multimodal understanding benchmark, while the blue metric is for the visual generation task. (c)-(d) We compare the speed and memory of OmniMamba with other unified models on the same single NVIDIA 4090 GPU. OmniMamba demonstrates up to a 119.2×\times× speedup and 63% GPU memory reduction for long-sequence generation.

| Type | Model | LLM Params | Res. | POPE | MME-P | VQAv2test | GQA | MMMU |

|---|---|---|---|---|---|---|---|---|

| Und. Only | LLaVA-Phi [79] | Phi-2-2.7B | 336 | 85.0 | 1335.1 | 71.4 | - | - |

| LLaVA [43] | Vicuna-7B | 224 | 76.3 | 809.6 | - | - | - | |

| Emu3-Chat [64] | 8B from scratch | 512 | 85.2 | - | 75.1 | 60.3 | 31.6 | |

| LLaVA-v1.5 [42] | Vicuna-13B | 448 | 86.3 | 1500.1 | 81.8 | 64.7 | - | |

| InstructBLIP [8] | Vicuna-13B | 224 | 78.9 | 1212.8 | - | 49.5 | - | |

| \cdashline2-8 | MobileVLM [6] | MobileLLaMA-1.4B | 336 | 84.5 | 1196.2 | - | 56.1 | - |

| MobileVLM-V2 [7] | MobileLLaMA-1.4B | 336 | 84.3 | 1302.8 | - | 59.3 | - | |

| LLaVA-v1.5-Phi-1.5 [67] | Phi-1.5-1.3B | 336 | 84.1 | 1128.0 | 75.3 | 56.5 | 30.7 | |

| Unified | LWM [44] | LLaMA2-7B | 256 | 75.2 | - | 55.8 | 44.8 | - |

| Chameleon [58] | 7B from scratch | 512 | - | - | - | - | 22.4 | |

| LaVIT [29] | 7B from scratch | 256 | - | - | 66.0 | 46.8 | - | |

| Emu3 [64] | 8B from scratch | 512 | 85.2 | 1243.8 | 75.1 | 60.3 | 31.6 | |

| \cdashline2-8 | Janus [66] | DeepSeek-LLM-1.3B | 384 | 87.0 | 1338.0 | 77.3 | 59.1 | 30.5 |

| JanusFlow [46] | DeepSeek-LLM-1.3B | 384 | 88.0 | 1333.1 | 79.8 | 60.3 | 29.3 | |

| Show-o [67] | Phi-1.5-1.3B | 512 | 80.0 | 1097.2 | 69.4 | 58.0 | 26.7 | |

| OmniMamba | Mamba-2-1.3B | 384 | 86.3 | 1290.6 | 77.7 | 60.8 | 30.6 |

🔼 Table 1 compares the performance of OmniMamba with other multimodal understanding models, categorized into those focusing solely on understanding and those integrating understanding with generation. It assesses performance across several benchmarks (POPE, MME-P, VQAv2test, GQA, and MMMU). Models with a similar parameter count to OmniMamba are highlighted for easier comparison. The table primarily showcases OmniMamba’s performance relative to other models with different architectural designs and training data scales in the context of multimodal understanding tasks.

read the caption

Table 1: Comparison with other methods on multimodal understanding benchmarks. “Und. only” refers to models that only perform multimodal understanding task, while “Unified” refers to models that unify both multimodal understanding and visual generation tasks. Models with a similar number of parameters to ours are highlighted in light blue for emphasis.

In-depth insights#

Mamba Efficiency#

Mamba’s efficiency stems from its innovative selective state space model (SSM) architecture, achieving linear computational complexity, a significant leap from the quadratic complexity of Transformers. This allows Mamba to process long sequences with significantly reduced computational cost and memory footprint. The selective mechanism enables dynamic adaptation to relevant information within the sequence, filtering out noise and irrelevant data, further boosting efficiency. Additionally, Mamba’s hardware-aware design optimizes memory access patterns and parallelization, resulting in faster inference speeds and improved throughput. By addressing the limitations of Transformers, Mamba offers a promising avenue for efficient processing of sequential data in various applications.

Unified Gen Models#

Unified generation models represent a significant leap in AI, merging various generative tasks like image and text creation into a single framework. This approach contrasts with traditional methods that treat each task separately. The core benefit lies in shared learning, where insights from one modality enhance the others, leading to more robust and efficient models. However, challenges remain in balancing the complexities of diverse data types and ensuring high-quality output across all modalities. The future of AI likely hinges on these unified models, promising more versatile and capable systems.

Decoupled Vocabs#

Decoupled Vocabularies represent a strategic design choice in multimodal models. Instead of a unified vocabulary for all modalities, a separate vocabulary is created for each modality (e.g., text and images). This approach offers several key advantages such as more efficient training as the model doesn’t need to learn modality distinctions from scratch; the vocabulary enforces a prior that guides generation, and reduces ambiguity and ensures relevant output for each modality. This strategy improves results, and the design ensures the correct output based on the modality.

LoRA Adaptation#

LoRA (Low-Rank Adaptation) is a parameter-efficient fine-tuning technique. It freezes the pre-trained model weights and introduces a small number of trainable parameters through low-rank decomposition matrices. This drastically reduces the computational cost and memory footprint. LoRA can be selectively applied to specific layers or modules, allowing for targeted adaptation to new tasks or domains. The lightweight nature of LoRA enables rapid experimentation and deployment. It can be used to adapt multimodal models without retraining the entire model, significantly speeding up the process. The technique facilitates task-specific adaptation within unified models, improving efficiency. The low overhead makes it suitable for resource-constrained environments.

Limited Data Use#

Limited data use is a critical consideration in modern machine learning. Training sophisticated models often requires vast datasets, raising concerns about privacy, computational cost, and generalization ability. Using smaller, more focused datasets can offer several advantages, such as: reducing the risk of overfitting, making model development more accessible to researchers with limited resources, and enabling faster training times. Efficient data utilization techniques like transfer learning, data augmentation, and semi-supervised learning can significantly improve the performance of models trained on limited data. The exploration of novel architectures optimized for low-data regimes is a promising area of research, potentially leading to more robust and generalizable AI systems with lower environmental impact.

More visual insights#

More on figures

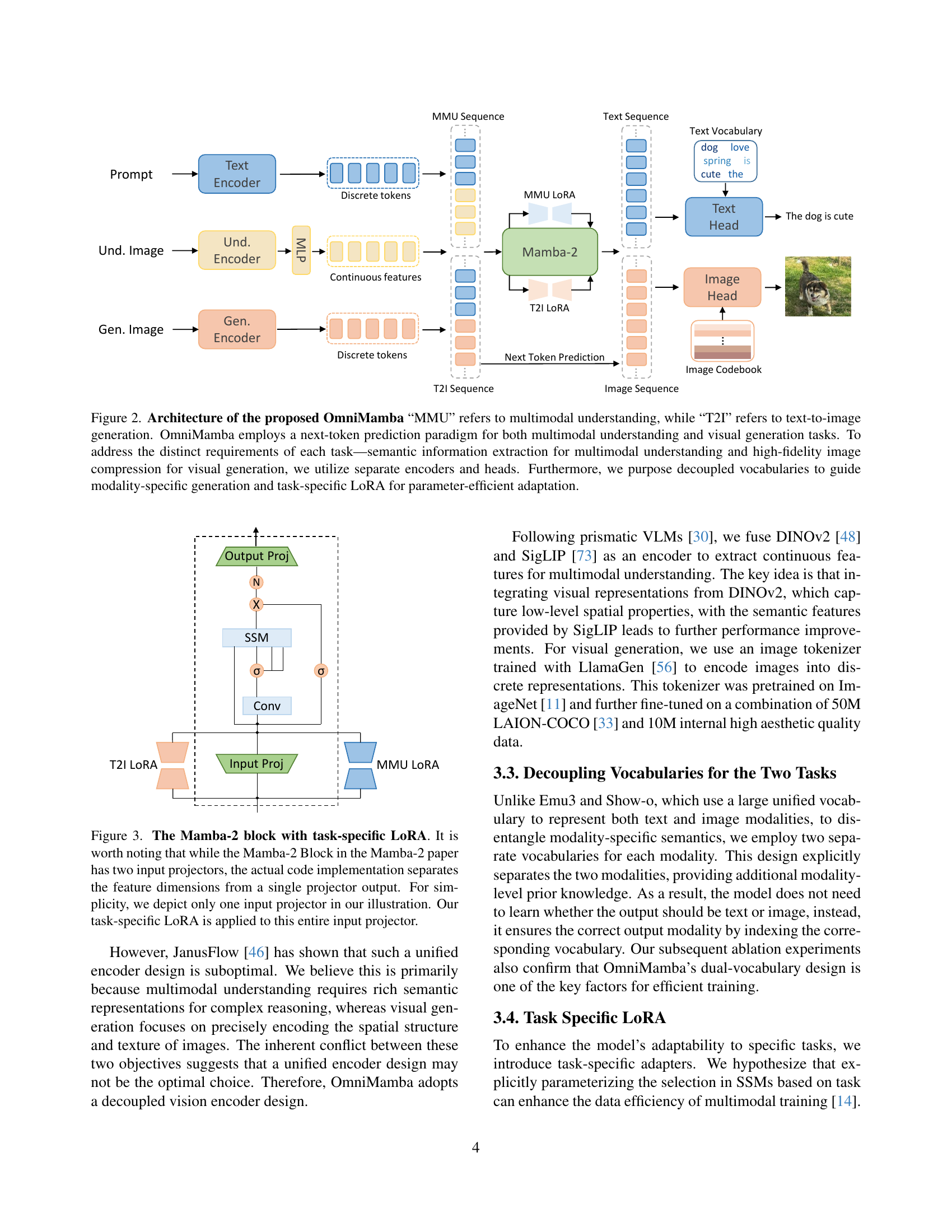

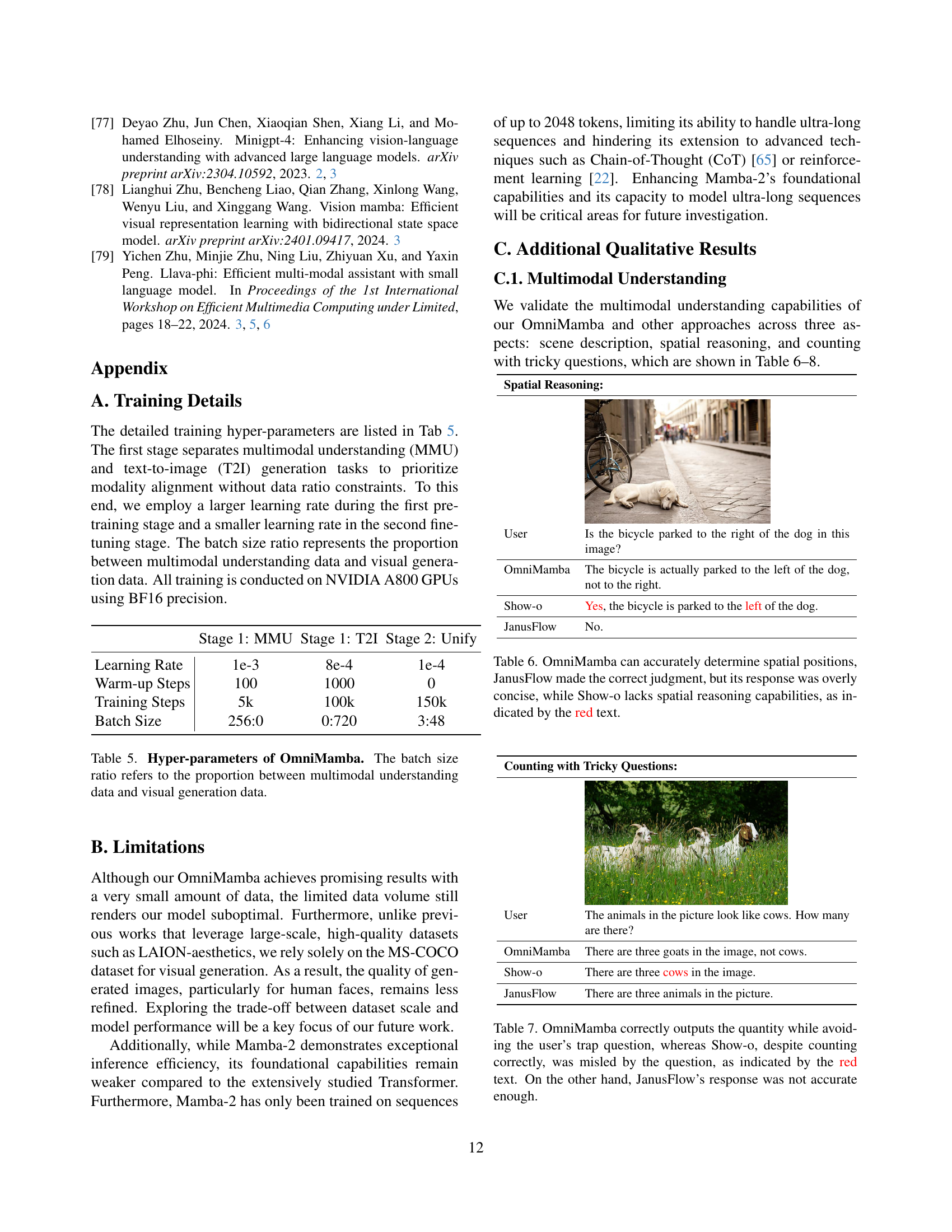

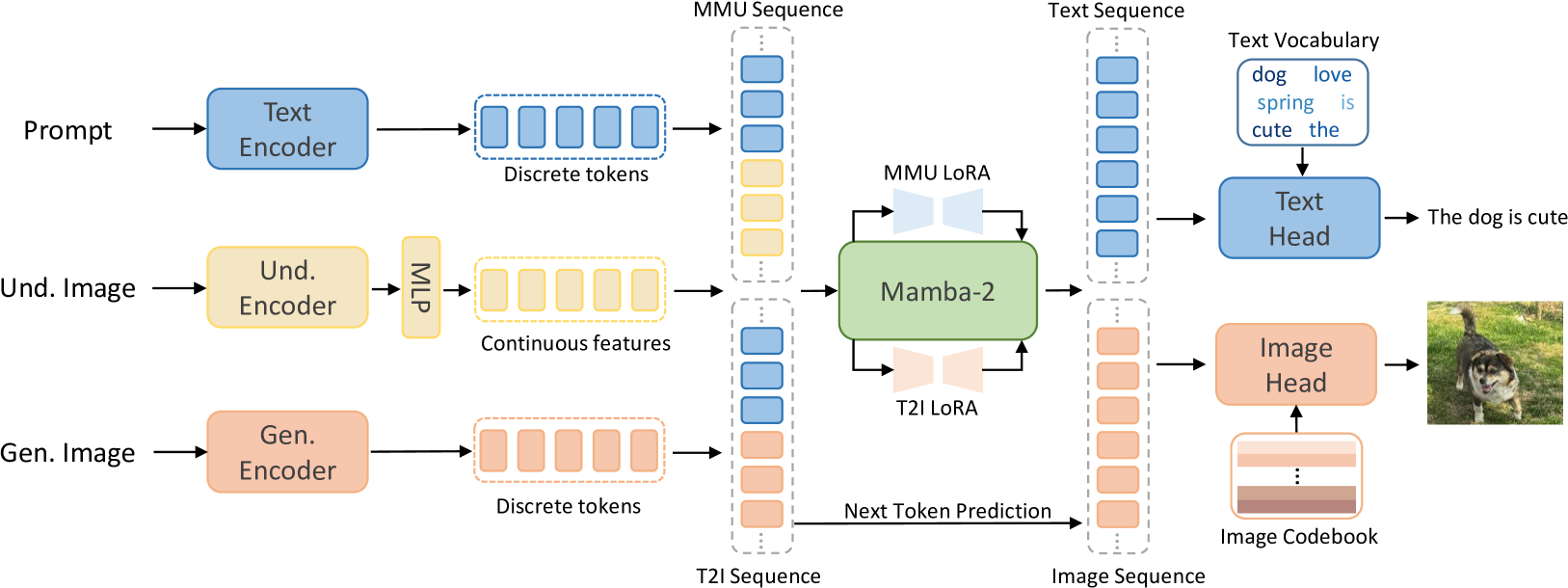

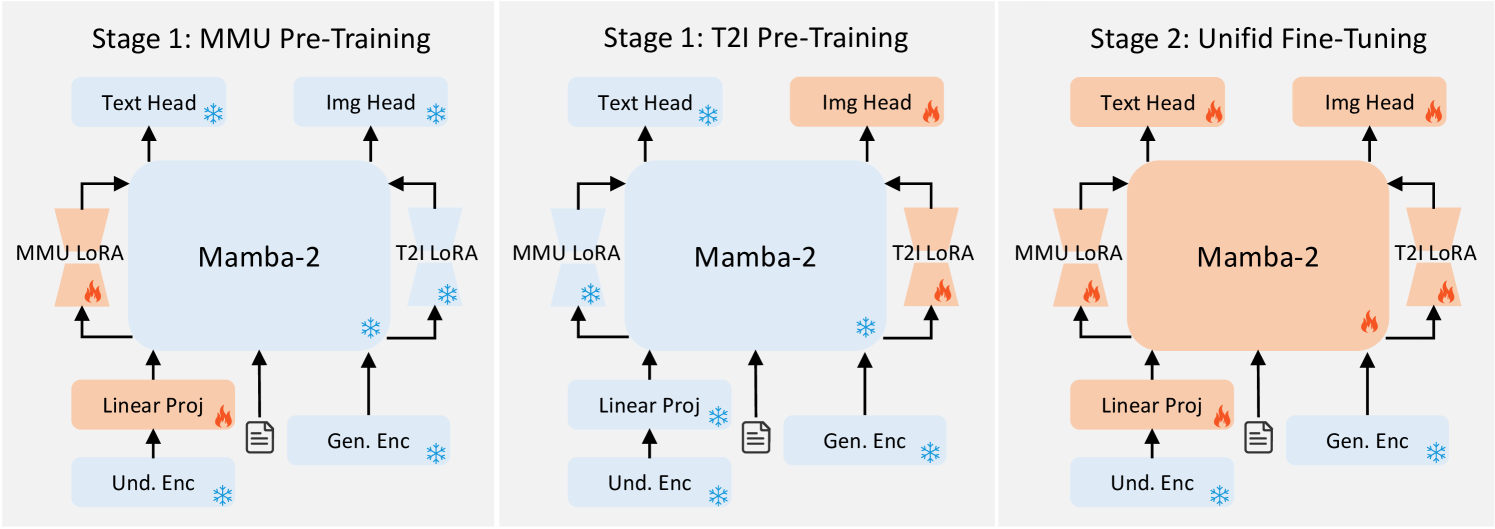

🔼 OmniMamba processes text and image data using a unified next-token prediction approach. Separate encoders and heads are used for multimodal understanding (MMU) and text-to-image (T2I) generation to handle their unique requirements: semantic extraction for MMU and high-fidelity image compression for T2I. Decoupled vocabularies guide modality-specific generation, and task-specific Low-Rank Adaptation (LoRA) enhances parameter efficiency.

read the caption

Figure 2: Architecture of the proposed OmniMamba “MMU” refers to multimodal understanding, while “T2I” refers to text-to-image generation. OmniMamba employs a next-token prediction paradigm for both multimodal understanding and visual generation tasks. To address the distinct requirements of each task—semantic information extraction for multimodal understanding and high-fidelity image compression for visual generation, we utilize separate encoders and heads. Furthermore, we purpose decoupled vocabularies to guide modality-specific generation and task-specific LoRA for parameter-efficient adaptation.

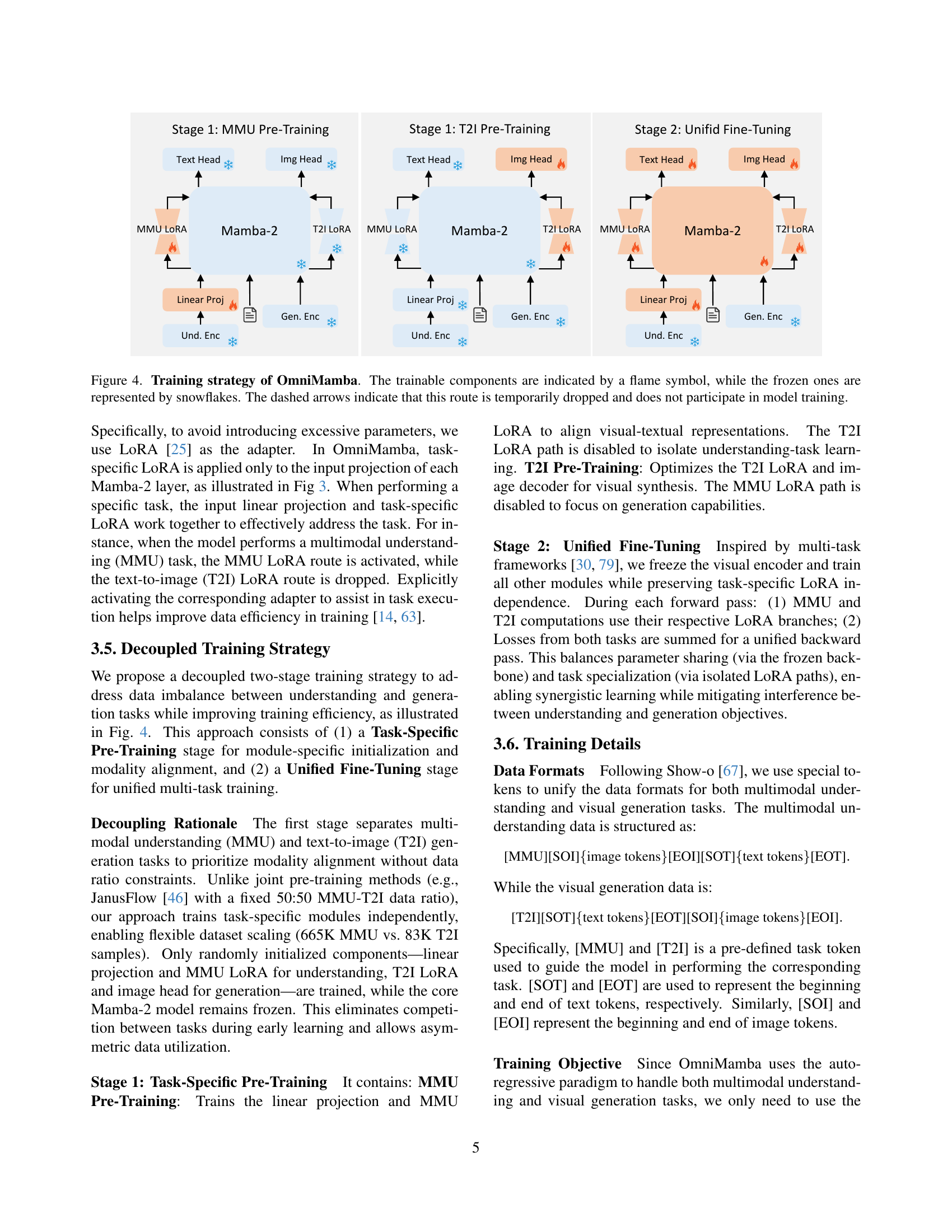

🔼 This figure illustrates a Mamba-2 block, a core component of the OmniMamba model, enhanced with task-specific Low-Rank Adaptation (LoRA). The original Mamba-2 architecture uses two input projectors, but the actual implementation merges feature dimensions from a single projector. This figure simplifies the diagram by showing only one input projector for clarity. Crucially, the task-specific LoRA modules are applied to this entire input projection, enabling efficient task-specific adjustments within the Mamba-2 block. This is key to the model’s ability to handle multimodal understanding and visual generation tasks efficiently.

read the caption

Figure 3: The Mamba-2 block with task-specific LoRA. It is worth noting that while the Mamba-2 Block in the Mamba-2 paper has two input projectors, the actual code implementation separates the feature dimensions from a single projector output. For simplicity, we depict only one input projector in our illustration. Our task-specific LoRA is applied to this entire input projector.

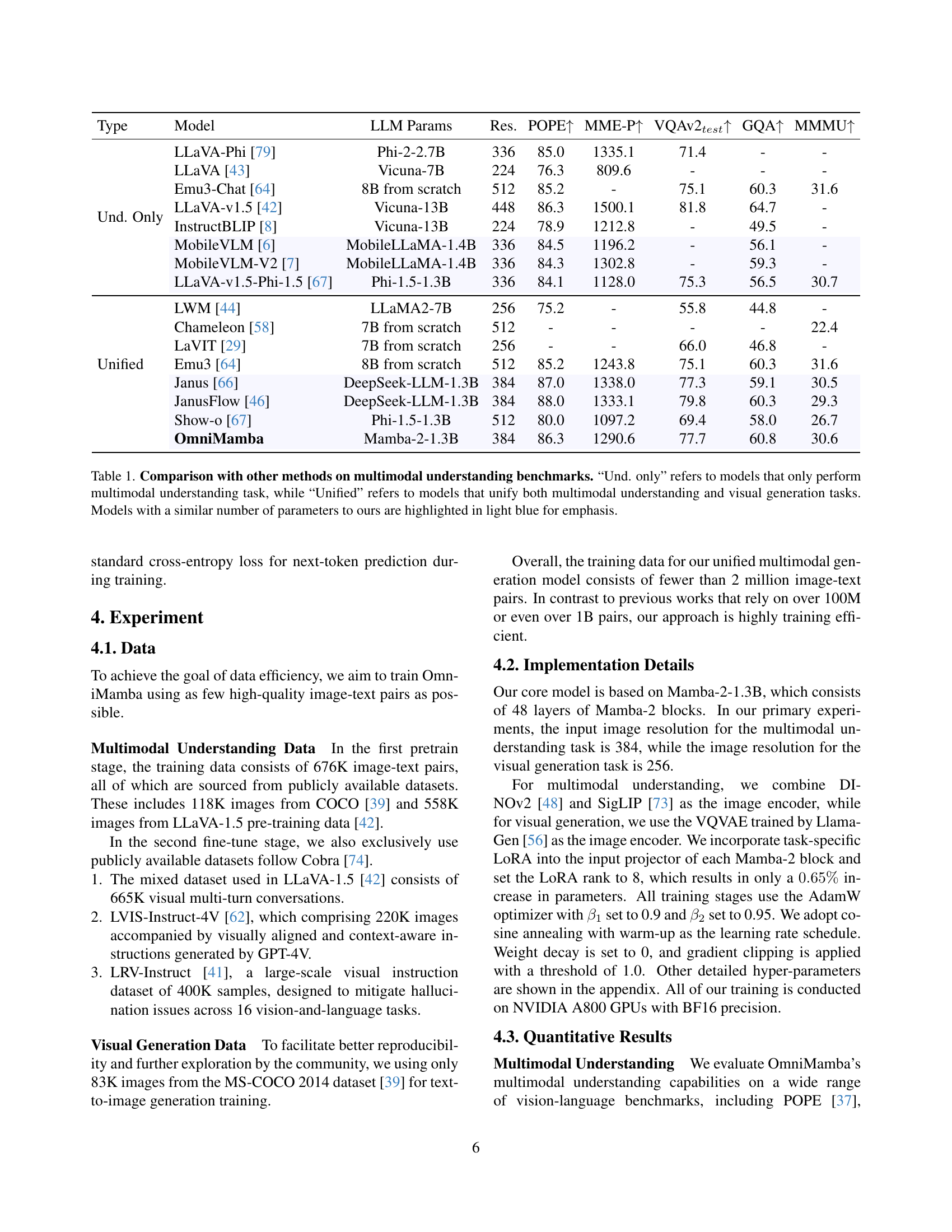

🔼 This figure details OmniMamba’s three-stage training process. Stage 1 involves separate pre-training for multimodal understanding (MMU) and text-to-image (T2I) generation. MMU pre-training focuses on aligning visual and textual representations, while T2I pre-training optimizes visual generation. Note that only specific components (indicated by flame symbols) are trained in this stage, while others (snowflakes) remain frozen to facilitate efficient learning. In Stage 2, unified fine-tuning integrates both MMU and T2I tasks, enabling synergistic learning. The dashed arrows represent the temporary disabling of certain paths during specific tasks. Stage 3 is a continuation of Stage 2, further refining model capabilities across both modalities. The figure uses visual cues such as flames and snowflakes to effectively communicate which parts of the model are being updated during each training phase.

read the caption

Figure 4: Training strategy of OmniMamba. The trainable components are indicated by a flame symbol, while the frozen ones are represented by snowflakes. The dashed arrows indicate that this route is temporarily dropped and does not participate in model training.

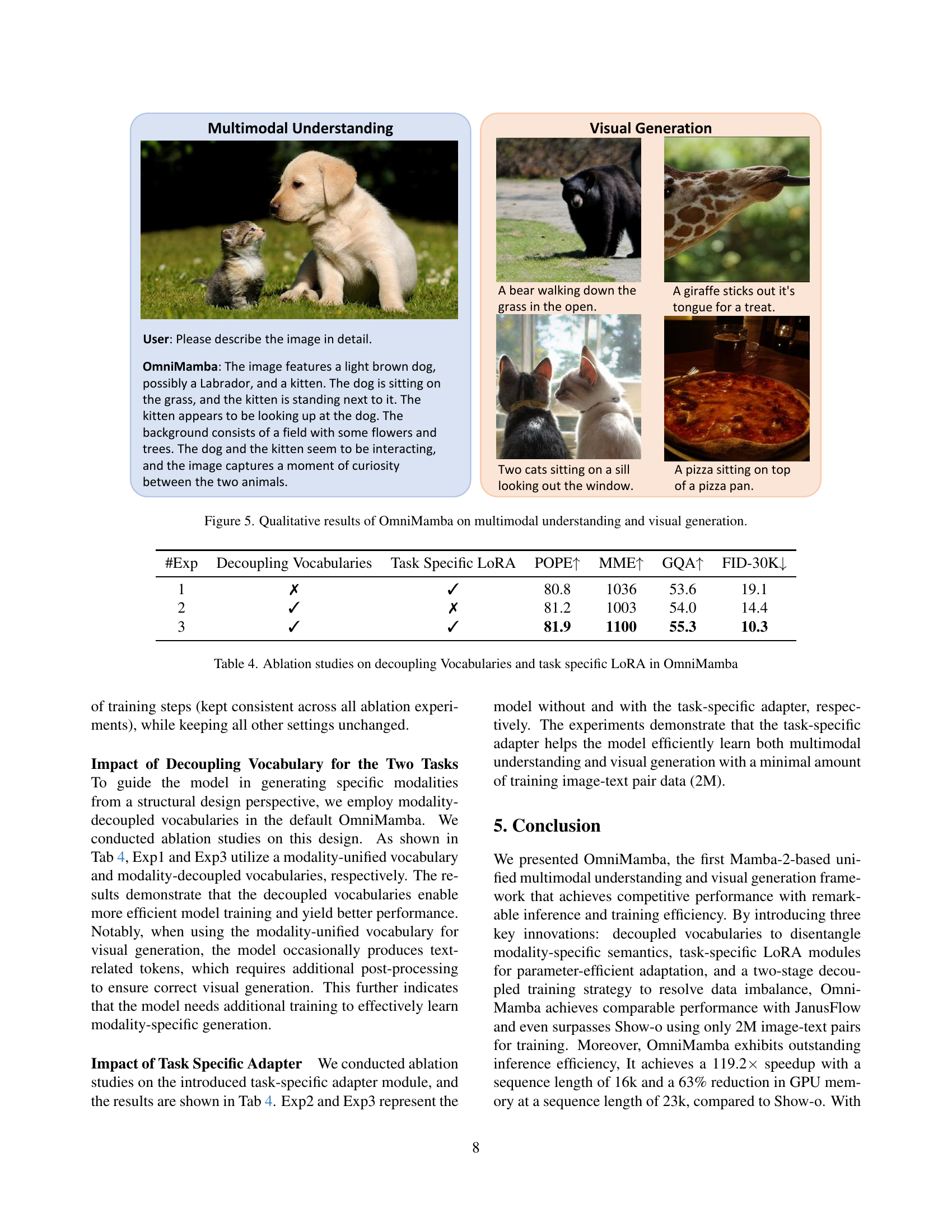

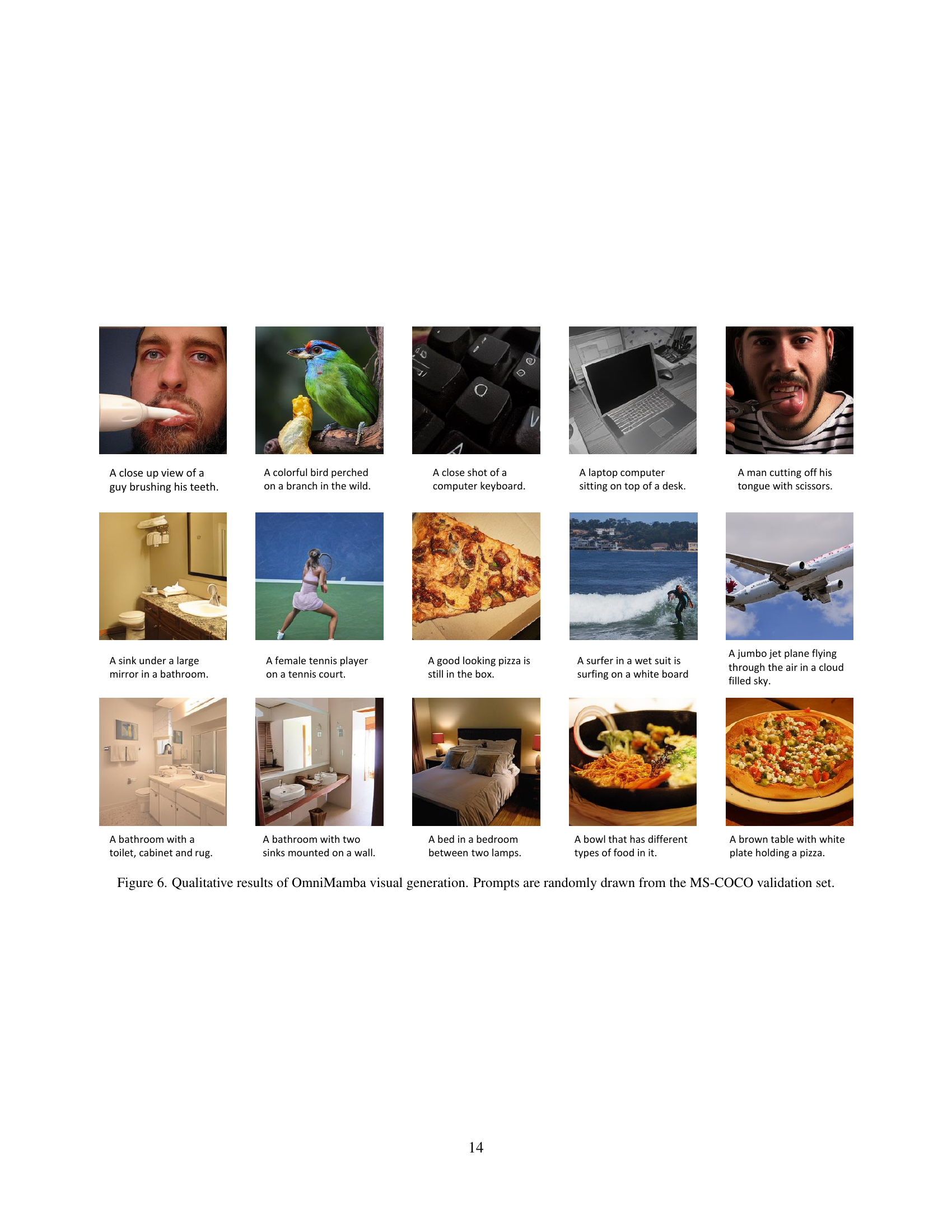

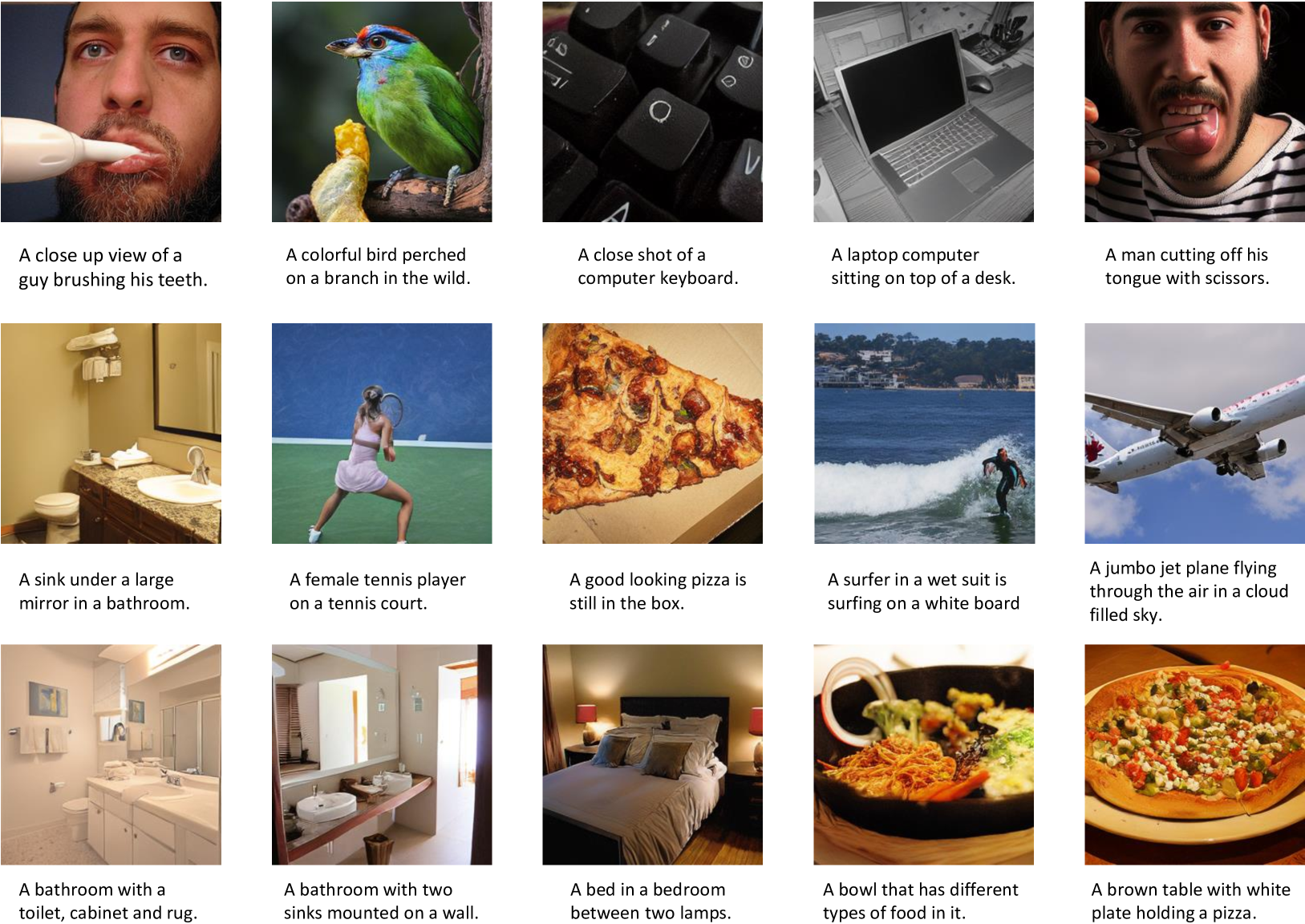

🔼 Figure 5 showcases example outputs from OmniMamba, demonstrating its capabilities in both multimodal understanding and visual generation. The top row presents prompts (user requests) for image description and image generation tasks. The bottom row displays OmniMamba’s corresponding responses. The multimodal understanding examples illustrate the model’s ability to accurately and comprehensively describe images. The visual generation examples demonstrate the model’s capacity to produce images aligned with the given prompts.

read the caption

Figure 5: Qualitative results of OmniMamba on multimodal understanding and visual generation.

More on tables

| Type | Model | Params | Images | FID-30K |

|---|---|---|---|---|

| Gen. Only | DALL·E [53] | 12B | 250M | 27.5 |

| GLIDE [47] | 5B | 250M | 12.24 | |

| DALL·E 2 [52] | 6.5B | 650M | 10.39 | |

| SDv1.5 [54] | 0.9B | 2000M | 9.62 | |

| PixArt [3] | 0.6B | 25M | 7.32 | |

| Imagen [55] | 7B | 960M | 7.27 | |

| Parti [69] | 20B | 4.8B | 7.23 | |

| Re-Imagen [4] | 2.5B | 50M | 6.88 | |

| U-ViT [1] | 45M | 83k(coco) | 5.95 | |

| Unified | CoDI [57] | - | 400M | 22.26 |

| SEED-X [20] | 17B | - | 14.99 | |

| LWM [44] | 7B | - | 12.68 | |

| DreamLLM [13] | 7B | - | 8.76 | |

| Show-o [67] | 1.3B | 35M | 9.24 | |

| OmniMamba | 1.3B | 83k(coco) | 5.50 |

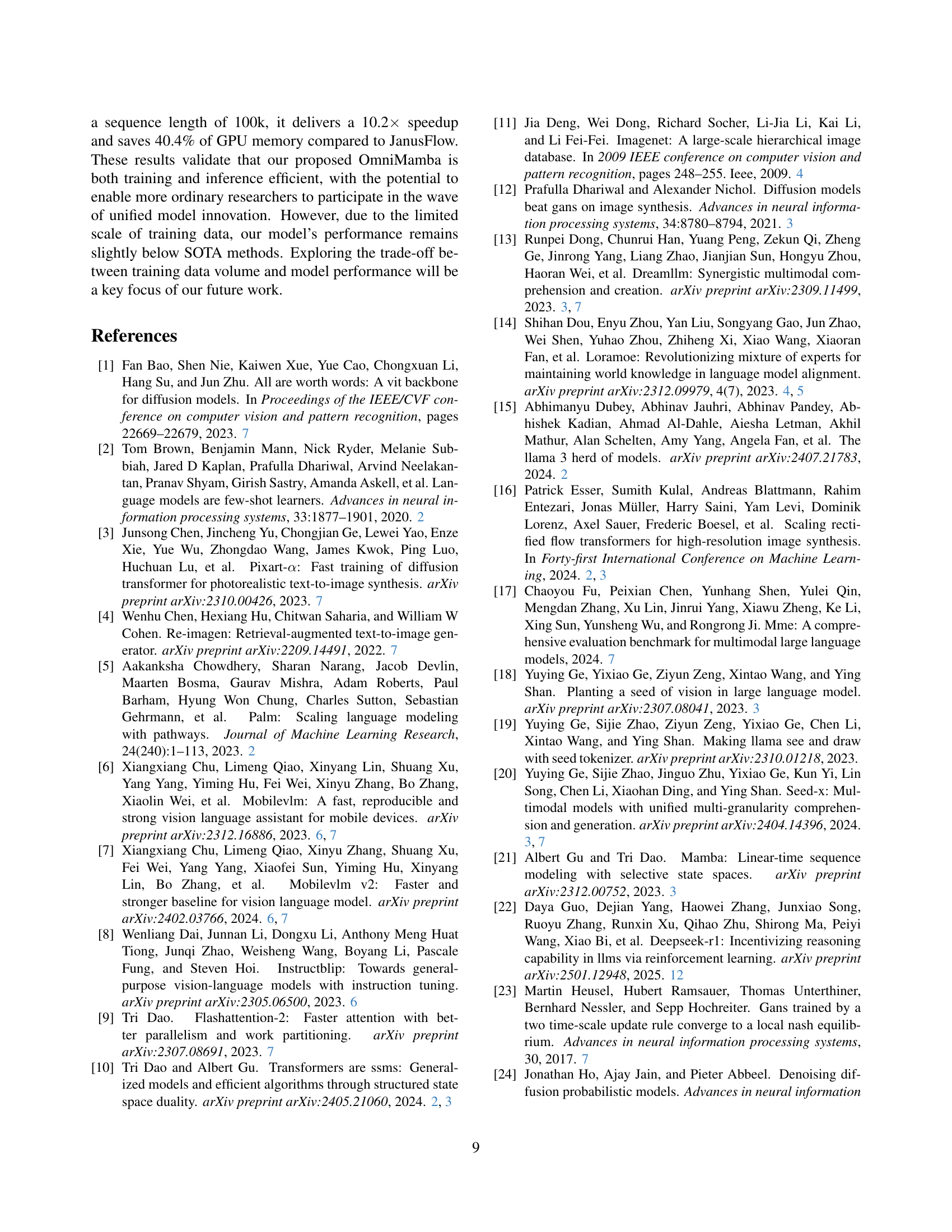

🔼 This table compares the visual generation capabilities of various models on the MS-COCO validation dataset. It separates models into two categories: those focused solely on visual generation (‘Gen. only’) and those that perform both visual generation and multimodal understanding (‘Unified’). The comparison is based on the FID (Fréchet Inception Distance) score, a lower score indicating better image quality. The table shows the model name, number of parameters, the number of images used for training, and the FID-30K score achieved.

read the caption

Table 2: Compare visual generation capability with other methods on MS-COCO validation dataset. “Gen. only” refers to models that only perform visual generation task, while “Unified” refers to models that unify both multimodal understanding and visual generation tasks.

🔼 This table compares the speed of image generation for three different models: OmniMamba, Show-o, and JanusFlow. The speed is measured in images per second (imgs/s) and total time taken to generate images in seconds (s). OmniMamba demonstrates a significant speed advantage, generating images 7 times faster than Show-o and 5.6 times faster than JanusFlow.

read the caption

Table 3: Image Generation Speed in Visual Generation Task. OmniMamba achieves 7.0 ×\times× faster image generation speed compared to Show-o and 5.6 ×\times× faster compared to JanusFlow.

| #Exp | Decoupling Vocabularies | Task Specific LoRA | POPE | MME | GQA | FID-30K |

|---|---|---|---|---|---|---|

| 1 | ✗ | ✓ | 80.8 | 1036 | 53.6 | 19.1 |

| 2 | ✓ | ✗ | 81.2 | 1003 | 54.0 | 14.4 |

| 3 | ✓ | ✓ | 81.9 | 1100 | 55.3 | 10.3 |

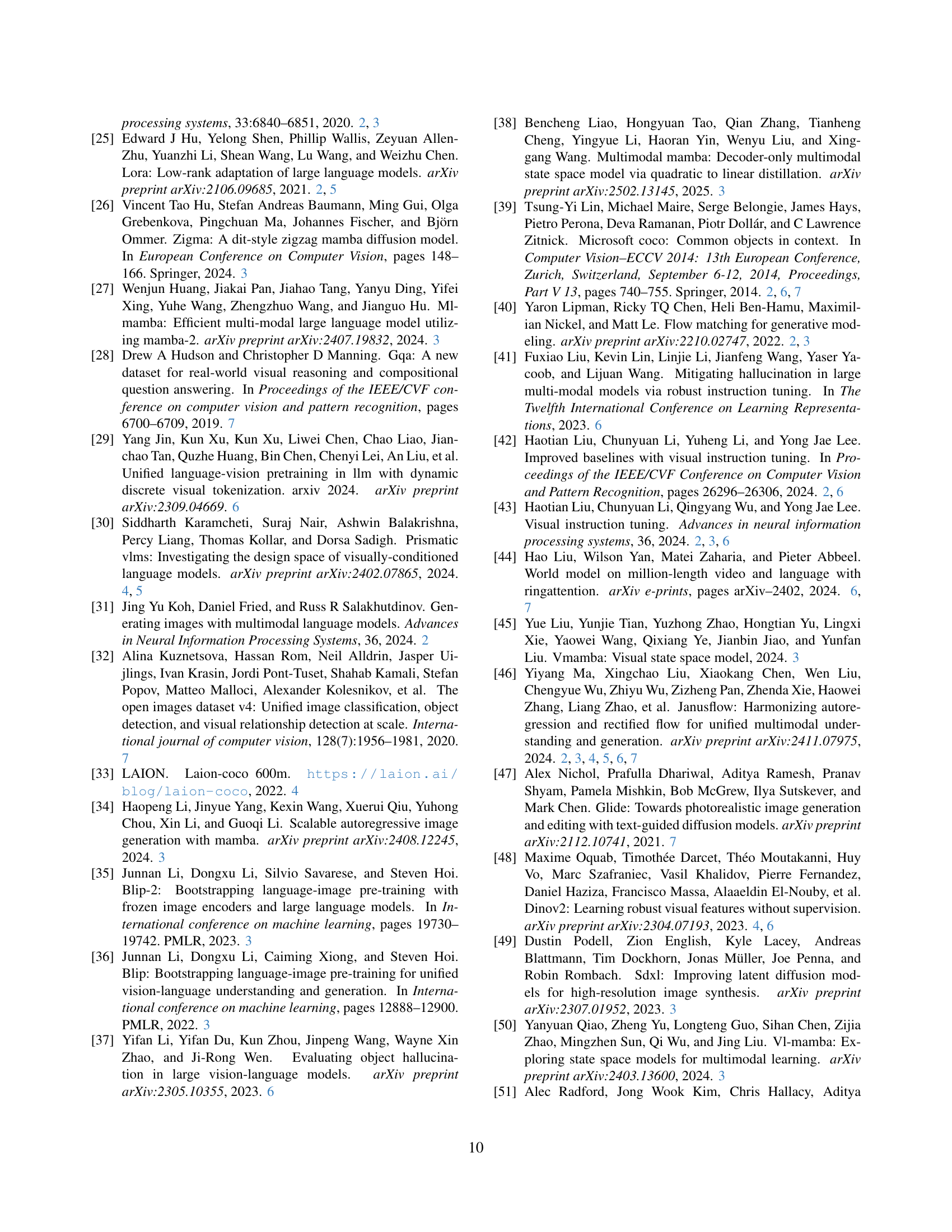

🔼 This table presents the results of ablation studies conducted on OmniMamba to evaluate the impact of two key design choices: using separate vocabularies for multimodal understanding and visual generation tasks, and employing task-specific Low-Rank Adaptation (LoRA). The table shows the performance metrics (POPE, MME, GQA, and FID-30K) achieved under different experimental conditions, enabling a comparison of the model’s performance with and without each of these design choices, demonstrating their individual and combined effects on the overall effectiveness of OmniMamba.

read the caption

Table 4: Ablation studies on decoupling Vocabularies and task specific LoRA in OmniMamba

| Stage 1: MMU | Stage 1: T2I | Stage 2: Unify | |

| Learning Rate | 1e-3 | 8e-4 | 1e-4 |

| Warm-up Steps | 100 | 1000 | 0 |

| Training Steps | 5k | 100k | 150k |

| Batch Size | 256:0 | 0:720 | 3:48 |

🔼 Table 5 details the hyperparameters used during the training of the OmniMamba model. It breaks down the settings for three distinct training stages: MMU pre-training (multimodal understanding), T2I pre-training (text-to-image), and unified fine-tuning. For each stage, it lists the learning rate, warm-up steps (the initial period of training with a gradually increasing learning rate), total number of training steps, and the batch size ratio. The batch size ratio is a crucial parameter indicating the proportion of training data allocated to multimodal understanding versus image generation. This ratio reflects a strategy to address the imbalance in the volume of data available for each task. The table provides a precise and comprehensive view of the training configuration, enabling reproducibility and facilitating analysis of the impact of hyperparameters on model performance.

read the caption

Table 5: Hyper-parameters of OmniMamba. The batch size ratio refers to the proportion between multimodal understanding data and visual generation data.

| Spatial Reasoning: | |

|---|---|

![[Uncaptioned image]](extracted/6270528/figure/dog_bicycle.jpg) | |

| User | Is the bicycle parked to the right of the dog in this image? |

| OmniMamba | The bicycle is actually parked to the left of the dog, not to the right. |

| Show-o | Yes, the bicycle is parked to the left of the dog. |

| JanusFlow | No. |

🔼 Table 6 presents a comparison of three different vision-language models (OmniMamba, JanusFlow, and Show-o) on a spatial reasoning task. The task involves determining the relative spatial positions of objects within an image. The results show that OmniMamba correctly identifies the spatial relationship, while JanusFlow provides a correct but overly brief response. Show-o, however, fails to demonstrate any understanding of the spatial relationships, highlighting the superior spatial reasoning capabilities of OmniMamba.

read the caption

Table 6: OmniMamba can accurately determine spatial positions, JanusFlow made the correct judgment, but its response was overly concise, while Show-o lacks spatial reasoning capabilities, as indicated by the red text.

| Counting with Tricky Questions: | |

|---|---|

![[Uncaptioned image]](extracted/6270528/figure/sheep.jpg) | |

| User | The animals in the picture look like cows. How many are there? |

| OmniMamba | There are three goats in the image, not cows. |

| Show-o | There are three cows in the image. |

| JanusFlow | There are three animals in the picture. |

🔼 Table 7 shows a comparison of three different models’ responses to a question designed to test their ability to count objects while avoiding misleading information. OmniMamba correctly identifies the number of goats in the image and avoids the trap of the question phrasing, demonstrating accurate counting ability and resistance to misleading information. In contrast, Show-o correctly counts the objects but is misled by the question’s phrasing, showcasing vulnerability to deceptive questioning. JanusFlow’s answer lacks precision and isn’t fully accurate.

read the caption

Table 7: OmniMamba correctly outputs the quantity while avoiding the user’s trap question, whereas Show-o, despite counting correctly, was misled by the question, as indicated by the red text. On the other hand, JanusFlow’s response was not accurate enough.

| Scene Description: | |

|---|---|

![[Uncaptioned image]](extracted/6270528/figure/dog_and_cat.png) | |

| User | Please describe it in detail. |

| OmniMamba | The image depicts a serene scene of a cat and a dog sharing a peaceful moment together. The cat, exhibiting a gray and white coat, is curled up comfortably, its head resting on the dog’s body. The dog, with its brown fur, is curled up in a similar position, with its head resting on the cat’s back. Their eyes are gently closed, signifying a deep sleep. The background is a blurred white, allowing the focus to remain on the sleeping feline and its canine friend. The image exudes warmth and affection, showcasing the close bond between the two animals. |

| Show-o | The image features a white and brown cat lying on a bed, curled up and sleeping. The cat is resting its head on a stuffed animal, which is a teddy bear. The teddy bear is positioned on the right side of the cat, with its head resting on the cat’s back. The scene captures a peaceful and cozy moment between the cat and the stuffed animal. |

🔼 Table 8 presents a qualitative comparison of scene descriptions generated by OmniMamba and Show-o. OmniMamba accurately describes a scene depicting a cat and dog sleeping together, correctly identifying their colors and postures. In contrast, Show-o makes errors, misidentifying the dog as a teddy bear and incorrectly describing the cat’s coloring. The caption highlights OmniMamba’s superior performance in accurately interpreting and describing visual scenes.

read the caption

Table 8: OmniMamba can accurately describe the information in the scene, whereas Show-o made a mistake about the color of the cat and misidentified the dog as a teddy bear, as indicated by the red text.

Full paper#