↗ OpenReview ↗ NeurIPS Proc. ↗ Chat

TL;DR#

Learning margin halfspaces with Massart noise (bounded label noise) is a fundamental problem in machine learning, yet computationally efficient algorithms have fallen short of the theoretical sample complexity limits. Prior works suggested a quadratic dependence on 1/ε for efficient algorithms. The challenge is in achieving low error (η+ε) with noise rate η<1/2 and margin γ efficiently.

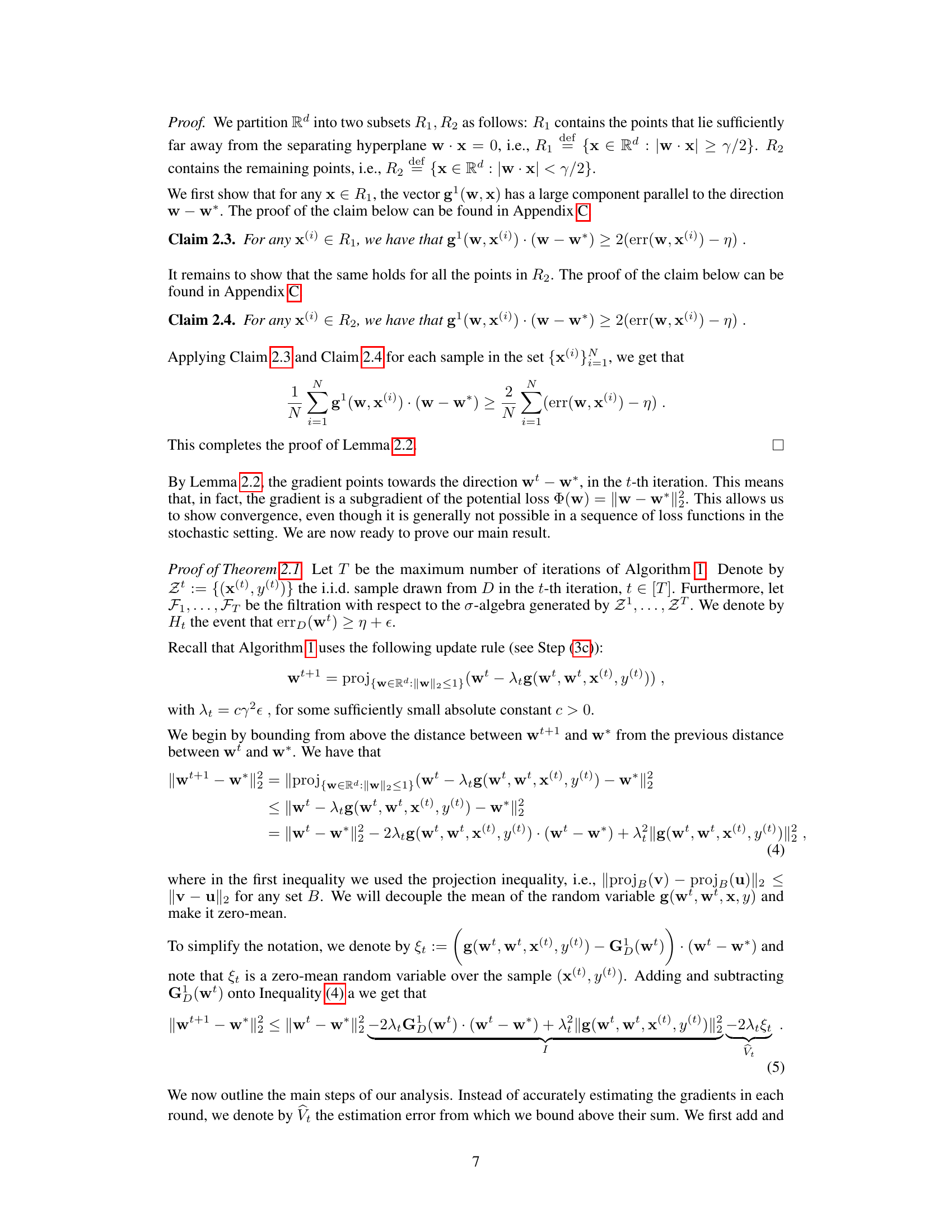

This paper introduces a new, computationally efficient algorithm that addresses these issues. It cleverly uses online stochastic gradient descent (SGD) on a carefully chosen sequence of convex loss functions to learn the halfspace. The algorithm’s sample complexity is nearly optimal, matching the theoretical lower bound and improving upon existing efficient algorithms. This offers a practical and nearly optimal solution to a longstanding challenge.

Key Takeaways#

Why does it matter?#

This paper is crucial because it presents a computationally efficient algorithm that nearly matches the theoretical lower bound for learning margin halfspaces with Massart noise. This significantly advances our understanding of the inherent trade-offs between computation and information in machine learning, offering practical solutions for real-world problems involving noisy data and high dimensionality.

Visual Insights#

Full paper#